Editor's Note: This is the second installment in a two-part series. Check out Part 1 here.

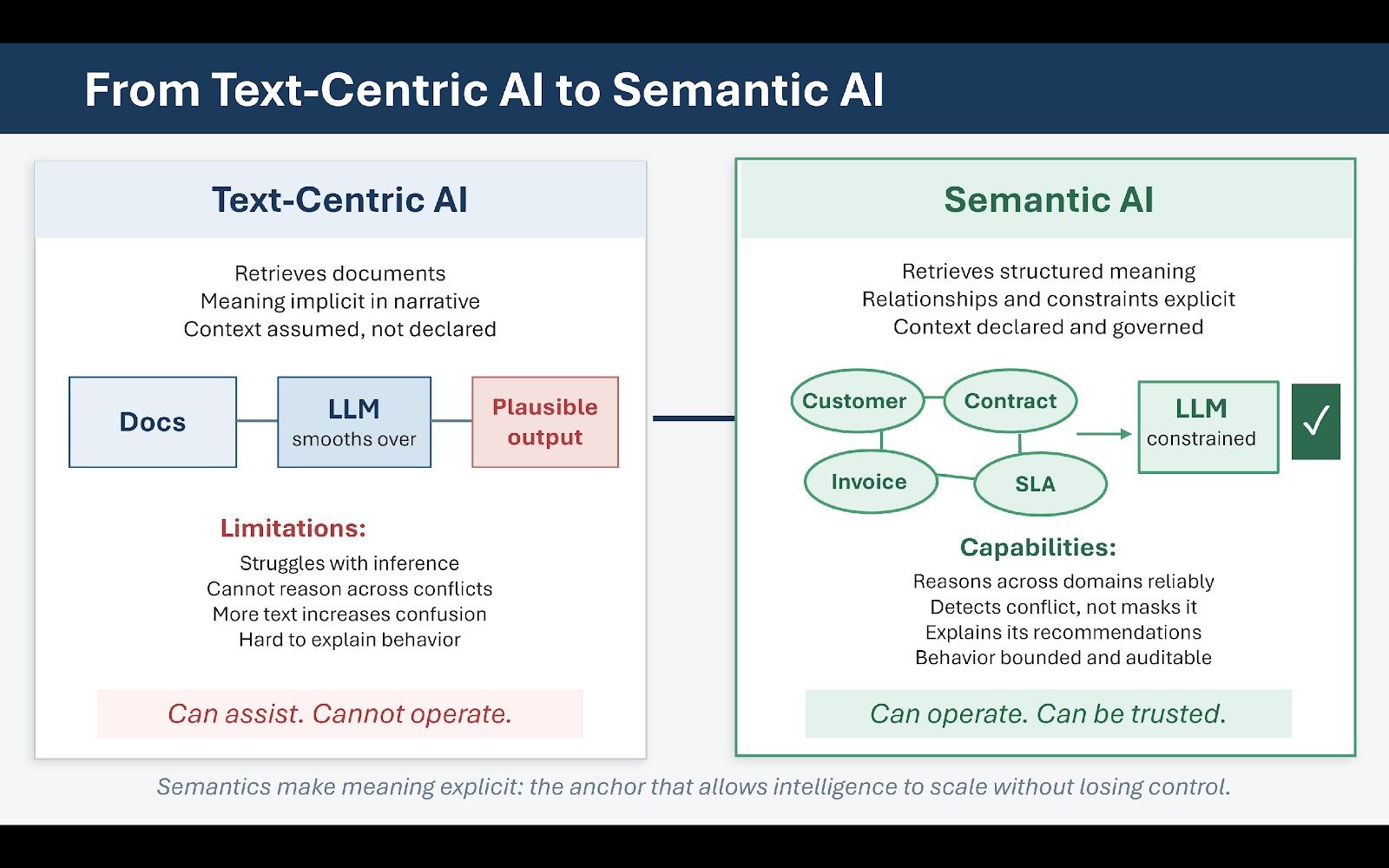

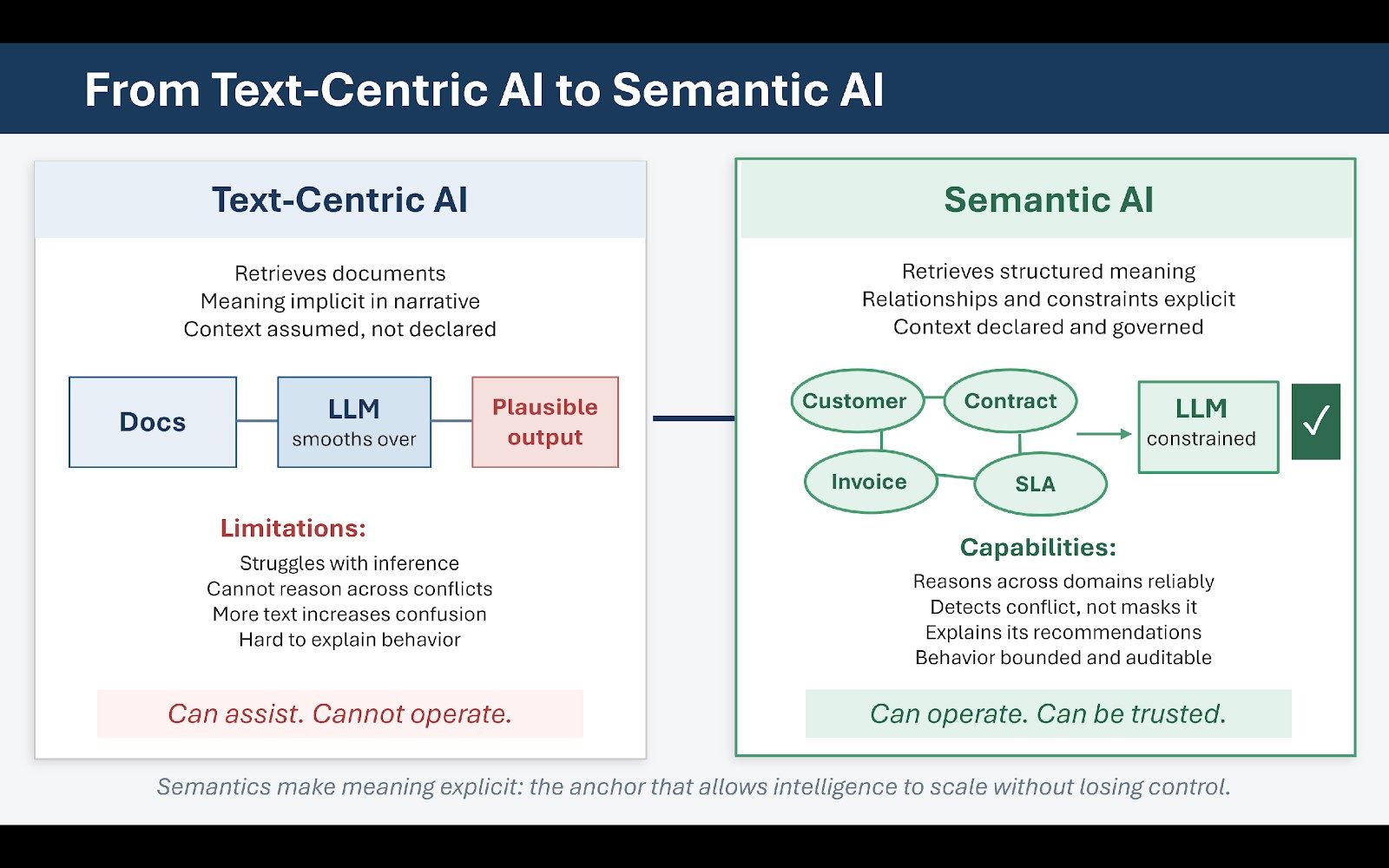

As organizations mature beyond early retrieval-augmented generation implementations, many encounter a familiar plateau. Accuracy improves, hallucinations decrease, and user satisfaction rises. Then progress slows. The system retrieves better documents, but reasoning remains shallow. It summarizes competently but struggles with inference. It explains policy but falters when policies conflict. It answers questions but cannot reliably act across domains.

This plateau is not a failure of models. It is the limit of text-centric intelligence.

Retrieval-augmented generation, at its core, retrieves documents. Even when retrieval is precise, documents remain narrative artifacts that encode meaning implicitly rather than explicitly. They assume shared context and reflect compromises, exceptions and historical drift that are legible to humans but opaque to machines. Language models are exceptionally good at smoothing over this ambiguity, and that is both their strength and their danger. When confronted with inconsistent or conflicting information, they produce plausible synthesis rather than structural resolution.

What Semantics Make Possible

This is where semantics become decisive. Semantics make meaning explicit. They encode relationships, constraints and distinctions that text alone cannot reliably convey. They answer questions that documents assume rather than state: What is a customer versus a prospect? How does a policy exception relate to a standard rule? Which product configurations are compatible? What conditions trigger escalation?

Knowledge graphs, controlled vocabularies and ontologies are not new technologies. What is new is their role as governance mechanisms for intelligence. In agentic systems, semantics provide the control surface: they define what can be inferred, what cannot and under what conditions action is appropriate. They constrain interpretation and make reasoning inspectable. Without semantics, agent behavior is emergent and difficult to explain. With semantics, agent behavior becomes bounded and auditable.

Reasoning Across Domains Requires Explicit Relationships

Consider a simple example: a customer inquiry that spans billing, contract terms and service history. Each domain may be documented clearly within its own system, but the relationships between them (which contract applies to which invoice, which service levels were in effect at the time, which exceptions were granted) are rarely explicit in text.

A language model can retrieve documents from each domain and produce a fluent response, but without explicit semantic relationships, it cannot reason reliably across them. It will infer, sometimes correctly, sometimes not.

A knowledge graph makes those relationships explicit. It encodes entities, attributes and connections. It defines what constitutes authority. It allows reasoning to proceed along known paths rather than speculative ones. This does not eliminate the role of large language models; it changes their role.
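
To make the billing and contract example concrete, here is a minimal sketch of how explicit relationships let a system follow known paths instead of guessing. It assumes a toy in-memory graph; the `KnowledgeGraph` class, the `billed_to` and `holds_contract` predicates, and the entity identifiers are all hypothetical names chosen for illustration, not part of any specific product.

```python
from datetime import date


class KnowledgeGraph:
    """A tiny in-memory store of entities, attributes and typed relationships."""

    def __init__(self):
        self.attrs = {}   # entity id -> attribute dict
        self._edges = {}  # (subject, predicate) -> list of object ids

    def add_entity(self, entity, **attrs):
        self.attrs[entity] = attrs

    def relate(self, subject, predicate, obj):
        self._edges.setdefault((subject, predicate), []).append(obj)

    def objects(self, subject, predicate):
        return list(self._edges.get((subject, predicate), []))


def contract_for_invoice(graph, invoice):
    """Follow invoice -> customer -> contract and keep contracts in effect
    on the invoice date. Returns None when the answer is missing or
    ambiguous, so the caller can escalate instead of guessing."""
    issued = graph.attrs[invoice]["issued"]
    matches = []
    for customer in graph.objects(invoice, "billed_to"):
        for contract in graph.objects(customer, "holds_contract"):
            terms = graph.attrs[contract]
            if terms["start"] <= issued <= terms["end"]:
                matches.append(contract)
    return matches[0] if len(matches) == 1 else None


# Hypothetical data: one customer, two consecutive contracts, one invoice.
g = KnowledgeGraph()
g.add_entity("invoice:1001", issued=date(2024, 3, 15))
g.add_entity("contract:A", start=date(2023, 1, 1), end=date(2023, 12, 31))
g.add_entity("contract:B", start=date(2024, 1, 1), end=date(2024, 12, 31))
g.relate("invoice:1001", "billed_to", "customer:acme")
g.relate("customer:acme", "holds_contract", "contract:A")
g.relate("customer:acme", "holds_contract", "contract:B")

applicable = contract_for_invoice(g, "invoice:1001")
```

In this sketch the language model would receive `applicable` as a resolved fact and explain it, rather than inferring the contract from document text.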

In mature systems, language models operate within a semantic frame rather than across an undifferentiated corpus. They generate explanations, narratives and recommendations constrained by explicit structure. Combining structured semantics with generative language produces a different class of system: one that is not merely helpful, but dependable.

Why More Text Does Not Mean Better Reasoning

This also addresses a common misconception about RAG. Many organizations assume that better embeddings, larger context windows or more aggressive chunking will solve reasoning problems. These techniques improve retrieval quality, but they do not resolve semantic ambiguity. They retrieve more text without clarifying meaning. As organizations scale agentic systems, they often discover that adding more text increases confusion rather than reducing it. Contradictions surface, edge cases multiply and prompts grow longer in an attempt to compensate.

Semantics reverse this dynamic. They reduce the amount of text required by externalizing meaning, allow prompts to reference concepts rather than restate assumptions and enable reuse across use cases. This is why organizations that initially dismissed knowledge graphs as "too heavy" are revisiting them, not as analytics tools or visualization aids, but as foundational infrastructure for AI governance.

Governance of Meaning: Semantic Stewardship

Importantly, semantics are not about achieving perfect models of reality. They are about shared understanding.

An ontology does not need to capture every nuance; it needs to capture enough to align behavior. This is where many efforts go wrong: teams attempt to model everything, complexity explodes, adoption stalls and the graph becomes an artifact rather than an asset. Successful semantic initiatives start small, focus on core entities and high-value relationships, evolve iteratively and are governed continuously.

This governance dimension is critical. Semantics are not static. Business models change, regulations evolve, new products are introduced and meaning shifts. In agentic systems, semantic drift is as dangerous as model drift. If definitions evolve without coordination, agents will act on outdated assumptions. Governance must monitor and manage this drift, which is why semantics cannot be treated as a one-time project. They are a living system that requires ownership, stewardship and feedback loops.
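
One way to monitor semantic drift is to version each definition and record which version every agent was validated against. The sketch below assumes that approach; `Vocabulary`, `stale_terms` and the sample definitions are hypothetical, and a real deployment would likely sit on top of an existing taxonomy or ontology management tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Definition:
    term: str
    version: int
    text: str


class Vocabulary:
    """A controlled vocabulary in which every change bumps a term's version."""

    def __init__(self):
        self._current = {}

    def publish(self, term, text):
        previous = self._current.get(term)
        version = previous.version + 1 if previous else 1
        self._current[term] = Definition(term, version, text)
        return self._current[term]

    def current_version(self, term):
        return self._current[term].version


def stale_terms(vocabulary, pinned):
    """Return terms whose definition has changed since the agent was validated.

    `pinned` maps each term to the version the agent was last checked against.
    """
    return sorted(
        term
        for term, version in pinned.items()
        if vocabulary.current_version(term) > version
    )


# Hypothetical example: the meaning of "customer" changes after validation.
vocab = Vocabulary()
vocab.publish("customer", "A party with at least one active contract.")
vocab.publish("prospect", "A party with no contract history.")
agent_pins = {"customer": 1, "prospect": 1}

vocab.publish("customer", "A party with an active contract or an open invoice.")
drifted = stale_terms(vocab, agent_pins)
```

A steward could run a check like this on a schedule and route any non-empty result to the owner of the affected agent.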

The Payoff: From Assistance to Operation

When done well, the payoff is substantial. AI agents operating over semantic infrastructure can reason across domains without improvisation, detect conflict rather than mask it, explain why a recommendation was made and surface uncertainty instead of hiding it. This transforms the relationship between humans and machines. Instead of asking, "Is this answer right?" users ask, "Why did the system reach this conclusion?" That shift is the foundation of trust.

It also enables more sophisticated orchestration. Agents can make decisions about when to act autonomously and when to escalate. They can detect when constraints are violated and defer when authority is unclear. These capabilities are not emergent properties of large models. They are designed outcomes of semantic architecture.

Text-centric AI can assist. Semantic AI can operate. That is why, in the agentic enterprise, meaning becomes the primary interface. Models may change, vendors may rotate, but semantics persist. They are the anchor that allows intelligence to scale without losing control.

Governance, Operating Models and the Design of Safe Autonomy

By the time organizations reach this stage in their agentic journey, the technical questions begin to give way to organizational ones. Models can be tuned, retrieval can be improved and semantics can be formalized. But without changes to governance and operating models, progress stalls. This is the point at which many enterprises find themselves unable to move beyond isolated successes.

The Ownership Gap

These enterprises have successful proofs of concept, engaged vendors and enthusiastic internal champions. Yet each new deployment feels bespoke, each new agent requires special handling and lessons learned in one domain fail to transfer cleanly to another. The underlying issue is not capability. It is ownership.

Agentic systems force enterprises to confront a question they have long deferred: who is responsible for meaning, behavior and outcome when machines act on behalf of the organization? In traditional environments, accountability was distributed implicitly. Business units owned processes, IT owned systems and governance intervened episodically, usually in response to incidents. This model worked because humans were the ultimate decision-makers.

Agents disrupt this balance. When a system makes recommendations, triggers actions or communicates externally, accountability must be explicit. Ambiguity that humans could navigate informally becomes operational risk.

New Roles for a New Architecture

Mature organizations respond by evolving their operating models. New roles emerge:

- Knowledge product owners take responsibility for semantic integrity within defined domains.

- Semantic stewards manage taxonomies, ontologies and metadata as living assets.

- AI governance leads oversee agent behavior, escalation paths and compliance with policy and regulation.

These roles are not bureaucratic inventions. They are structural responses to the reality of distributed intelligence. Without them, agents become orphaned capabilities. With them, agents become managed participants in the enterprise.

Bounded Autonomy: The Governing Principle

Governance, in this context, is not about restriction. It is about bounded autonomy. Bounded autonomy recognizes that not all decisions should be automated and not all uncertainty can be resolved algorithmically. It designs explicit thresholds for confidence, authority and escalation. Well-governed agentic systems know when to act and when to stop. They surface uncertainty rather than masking it, defer when inputs are ambiguous or conflicting and escalate to humans when consequences exceed predefined bounds.
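
The thresholds described above can be sketched as a small decision function. This is an illustration under stated assumptions, not a reference design: the `Bounds` and `Proposal` fields, the numeric values and the ordering of the checks are all hypothetical and would be set by the governance roles responsible for each domain.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ACT = "act"            # proceed autonomously
    DEFER = "defer"        # hold and ask for clarification
    ESCALATE = "escalate"  # hand the decision to a human


@dataclass(frozen=True)
class Bounds:
    min_confidence: float  # below this, the agent must not act
    max_impact: float      # above this, a human must decide


@dataclass(frozen=True)
class Proposal:
    confidence: float       # the system's assessed confidence, 0.0 to 1.0
    impact: float           # estimated consequence, e.g. a monetary amount
    sources_conflict: bool  # True when retrieved sources disagree


def decide(proposal, bounds):
    """Apply explicit limits before an agent is allowed to act."""
    # Consequences beyond the predefined bound always go to a human.
    if proposal.impact > bounds.max_impact:
        return Action.ESCALATE
    # Ambiguous or conflicting inputs are surfaced, not smoothed over.
    if proposal.sources_conflict or proposal.confidence < bounds.min_confidence:
        return Action.DEFER
    return Action.ACT


# Hypothetical limits for a refund-handling agent.
refund_bounds = Bounds(min_confidence=0.8, max_impact=500.0)
routine = decide(Proposal(0.93, 120.0, False), refund_bounds)
conflicted = decide(Proposal(0.93, 120.0, True), refund_bounds)
high_stakes = decide(Proposal(0.99, 5000.0, False), refund_bounds)
```

Keeping the limits in a separate `Bounds` object means they can be reviewed and changed by governance owners without touching agent logic.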

Information architecture enables this behavior by providing the signals governance relies on. Provenance indicates authority, semantics clarify scope and metadata encodes context. Together, they allow systems to assess confidence meaningfully. This is why governance cannot be bolted on after agents are deployed. It must be designed into the system from the beginning.

Designing for Recoverable Failure

Another defining characteristic of mature agentic enterprises is how they think about failure. Agents will fail: models will hallucinate, retrieval will miss context and edge cases will surface. The question is not whether failure occurs, but how it occurs and how it is contained.

In poorly architected systems, failure is silent and cumulative. Errors propagate quickly, root causes are difficult to trace and trust erodes gradually before collapsing.

In well-architected systems, failure is detectable, traceable and recoverable. The system knows what it does not know, logs decisions and sources and allows humans to audit reasoning after the fact. This is not accidental; it is the result of deliberate design. Information architecture provides the scaffolding for recoverable failure: provenance links outputs to sources, semantics localize error to specific assumptions and governance defines remediation pathways.
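
A minimal sketch of the logging side of recoverable failure follows. It assumes each agent decision is recorded with the sources and assumptions behind it, so that a faulty source can be traced to every output it influenced. The `DecisionLog` class and the identifiers are hypothetical; a production system would persist records to durable storage rather than keep them in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    output: str
    sources: tuple      # provenance: identifiers of the documents or facts used
    assumptions: tuple  # semantic assumptions the decision relied on
    logged_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class DecisionLog:
    """An append-only, in-memory log of agent decisions."""

    def __init__(self):
        self._records = []

    def record(self, decision_id, output, sources, assumptions=()):
        entry = DecisionRecord(
            decision_id, output, tuple(sources), tuple(assumptions)
        )
        self._records.append(entry)
        return entry

    def affected_by(self, source):
        """List decisions that relied on a source later found to be wrong."""
        return [r.decision_id for r in self._records if source in r.sources]


# Hypothetical usage: a policy document turns out to be outdated.
log = DecisionLog()
log.record(
    "d1",
    "Refund approved",
    sources=["policy:refunds-v3", "invoice:1001"],
    assumptions=["customer holds an active contract"],
)
log.record("d2", "Service credit denied", sources=["policy:sla-v2"])
to_review = log.affected_by("policy:refunds-v3")
```

With records like these, remediation starts from a known list of affected decisions instead of a search through outputs.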

Scaling Through Discipline, Not Drama

The difference between organizations that move beyond isolated successes and those that remain stuck is not technological sophistication. It is institutional readiness. Organizations that scale agents successfully treat intelligence as infrastructure. They invest in shared foundations rather than isolated solutions, standardize retrieval and orchestration patterns, enforce semantic reuse and measure quality continuously.

They also resist the temptation to chase novelty for its own sake. Instead of deploying agents everywhere, they focus on domains where structure exists or can be established. They expand deliberately, using each deployment to strengthen the underlying architecture. This approach requires patience and lacks the drama of sweeping transformation announcements, but it produces durable results.

Over time, something subtle but profound happens. The enterprise stops talking about "AI projects" and starts talking about how work gets done. Intelligence becomes embedded rather than exceptional. Agents fade into the background, noticeable only when they surface insight or handle complexity gracefully. This is the true mark of agentic maturity.

The Architecture of the Agentic Enterprise

The future enterprise will not be defined by the number of agents it deploys, the size of its models or the sophistication of its interfaces. It will be defined by the coherence of its knowledge and the discipline of its architecture. Search, metadata, knowledge graphs, governance and orchestration will converge into a single cognitive infrastructure. Agents will sit atop that infrastructure, not as replacements for human judgment, but as extensions of organizational intent.

The winners will not be the most aggressive adopters. They will be the most disciplined builders. Automation amplifies what already exists: fragmented structure produces amplified chaos; coherent structure produces amplified intelligence.

That is why the principle holds, even as technology evolves: No AI without information architecture. No agents without semantic foundations. No transformation without orchestration. Information architecture gives intelligence something to stand on. Governance gives it boundaries. Orchestration gives it purpose. Together, they define the architecture of the agentic enterprise.
