A law firm uses AI to research case strategy for a particular client. An investment team queries a chatbot about a client's portfolio risk. A physician consults an AI tool for a differential diagnosis on a patient suspected of having cancer. A board conducts confidential scenario planning through an enterprise AI platform. In each case, something protected is being exposed unintentionally. The exposure is not malicious, as in a security breach. It comes from the design of the tool itself.

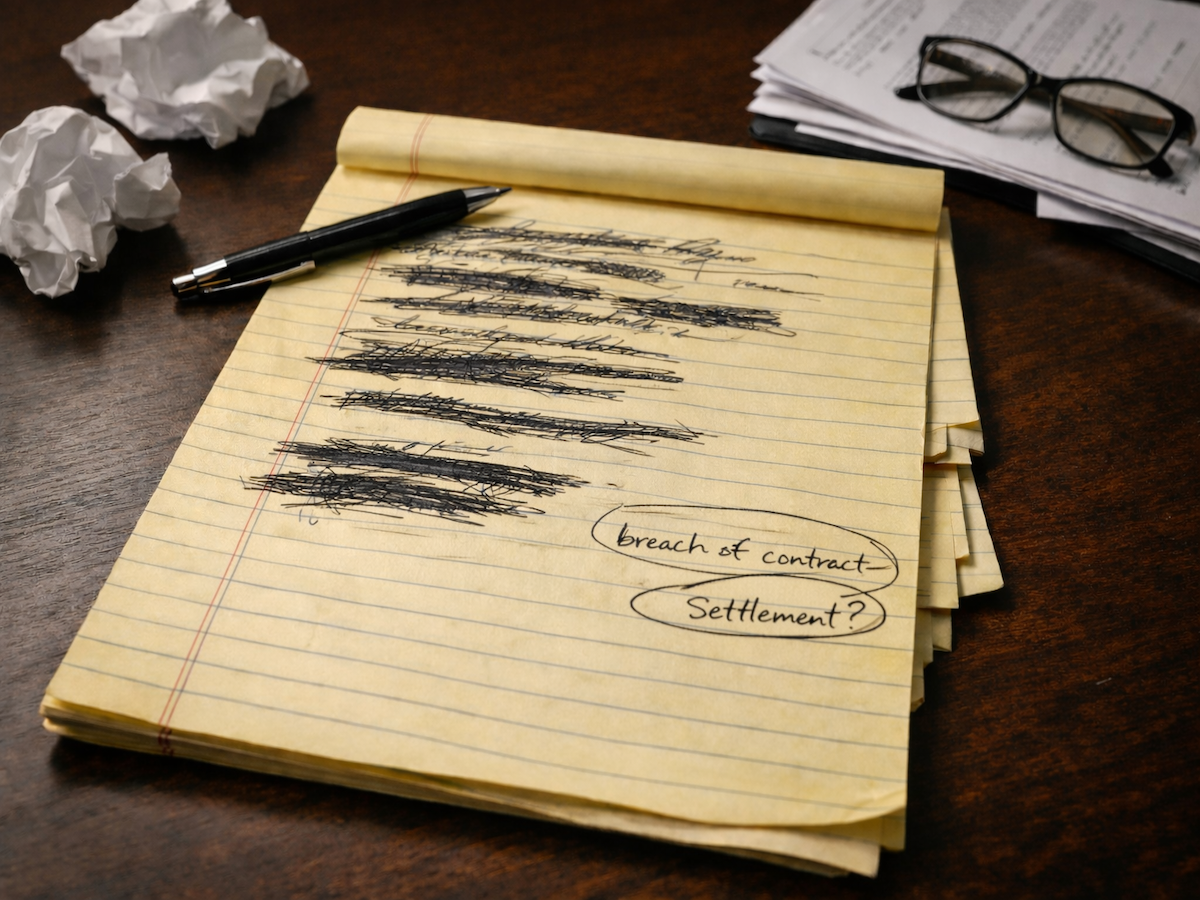

Think about writing a difficult email. You type a sentence, delete it, rephrase it, pause for twelve seconds, then delete the whole thing and start over. Traditional data privacy protects what you eventually send. But what about the drafts? The hesitation? The abandoned arguments?

Those intermediate states of thinking can now be tracked and analyzed as data. And when that thinking happens through AI tools, it flows out of your organization to external companies that then control the data.

This risk has already triggered major corporate shifts. In 2023, Samsung banned the use of generative AI across its most sensitive divisions after discovering that engineers had unintentionally leaked proprietary source code and internal meeting notes by pasting them into a chatbot for debugging and summarization. The company realized that once that data reached external servers, it was effectively out of its control. It could be retained indefinitely and potentially used to train the very models its competitors might use in the future.

Table of Contents

- The Nouns vs. The Verbs

- Cognitive Biometrics

- Where AI Introduces Vulnerabilities

- The 'Rough Draft' Problem

- What Fiduciary-Grade AI Requires

- The Procurement Question

The Nouns vs. The Verbs

Current data protection focuses on static nouns (your name, your diagnosis, your credit card number). If someone steals the list, you have a breach. You cancel the card. You contain the damage.

But cognitive process data isn't about items. It's about verbs: the intermediate states of analysis, thought and inferred feeling. Suppose a lawyer drafts three arguments before settling on one. The abandoned strategies can reveal more about his reasoning than the argument he finally makes. Similarly, if a doctor pauses for ten seconds before typing the word "cancer," that hesitation is captured as behavioral data. It can reveal her uncertainty, her intuition and her level of concern.

This isn't a security flaw or a "leak" in the traditional sense. It is simply how the technology is designed to work. The tool doesn't just want your final answer. The more valuable information comes from observing how you think.
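To make the nouns-versus-verbs distinction concrete, here is a minimal Python sketch. It assumes a hypothetical editor that reports every keystroke with a timestamp. `KeyEvent`, `hesitation_profile`, `replay` and the five-second pause threshold are illustrative inventions, not any vendor's actual telemetry. The sketch shows how a single typing session yields both a finished sentence and a record of how it was produced.

```python
from dataclasses import dataclass


@dataclass
class KeyEvent:
    t: float   # seconds since the (hypothetical) session started
    key: str   # a single typed character, or "BACKSPACE"


def hesitation_profile(events, pause_threshold=5.0):
    """Summarize the 'verbs' behind a final text: long pauses and deletions."""
    text = []        # characters currently on screen
    pauses = []      # (pause length in seconds, text on screen when the pause ended)
    deletions = 0    # number of backspaces pressed
    prev_t = None
    for ev in events:
        if prev_t is not None and ev.t - prev_t >= pause_threshold:
            pauses.append((round(ev.t - prev_t, 1), "".join(text)))
        if ev.key == "BACKSPACE":
            if text:
                text.pop()
            deletions += 1
        else:
            text.append(ev.key)
        prev_t = ev.t
    return {"final_text": "".join(text), "pauses": pauses, "deletions": deletions}


def replay(steps):
    """Turn (delay before key, key) pairs into timestamped KeyEvents."""
    t, events = 0.0, []
    for delay, key in steps:
        t += delay
        events.append(KeyEvent(t, key))
    return events


# A hypothetical clinician types "benign", deletes it, types "likely ",
# waits ten seconds, then types "cancer".
steps = [(0.2, c) for c in "benign"]
steps += [(0.2, "BACKSPACE")] * 6
steps += [(0.2, c) for c in "likely "]
steps += [(10.0, "c")] + [(0.2, c) for c in "ancer"]

profile = hesitation_profile(replay(steps))
print(profile)
```

The final text reads "likely cancer," but the profile also records six deletions (the abandoned "benign") and a ten-second pause just before "cancer." None of that appears in the finished sentence.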


Cognitive Biometrics

The risk is moving beyond "data" and into the realm of our inner lives. A 2024 paper in Neuron introduced the term "cognitive biometrics" for simple behavioral and physiological signals from which a person's mental state (such as focus, stress or intent) can be inferred. The researchers analyzed the privacy policies of seventeen major technology companies and found a startling lack of protection for biometric tracking. Only Apple explicitly encrypts biometric data in a way that prevents even the company's own employees from accessing it.

Similarly, a 2024 white paper from the Neurorights Foundation reviewed thirty neurotechnology companies and found that nearly all of them retained broad rights to the neural data they collected, often with vague or non-existent storage standards.

Suppose a litigator is using an AI-powered research tool to prepare for trial. She drafts a prompt outlining a "Plan B" strategy that relies on a controversial interpretation of a statute. She deletes it, deciding it's too risky, and moves to a more conservative argument. Her final product is protected under current law. But the AI tool functions by observing her pathways of thinking, not just her final decision. The external company now knows not only what the firm will argue in court, but also the specific vulnerabilities it was afraid to expose.

Where AI Introduces Vulnerabilities

If your enterprise AI vendor follows the industry-standard practices described above, your team's sensitive analytical processes and "private" drafts are likely far less protected than you assume.

| Relationship | What Law Protects | What AI Observes |
|---|---|---|
| Attorney-Client | Communications | Research queries, draft arguments, abandoned strategies |
| Physician-Patient | Medical records | Diagnostic reasoning, differential considerations, uncertainty |
| Financial Advisor | Investment decisions | Analysis methodology, risk assessment process |
| Corporate Board | Deliberations | Strategic scenarios, competitive assessments, deal modeling |

The communications are protected. The cognitive processes that produced them (now externalized through AI) may not be.

The 'Rough Draft' Problem

The rough draft is where your real intent lives. The polished final version is a performance. The messy thinking underneath is the actual reasoning. When you draft an idea and cross out sentences, abandon a thought or express uncertainty, those moments of doubt become valuable behavioral data.

When professionals use AI for analytical work, they give external systems access to their rough drafts. These are not the conclusions they're willing to defend. They are the reasoning they're still working through.

A law firm might protect final client communications meticulously while letting strategic brainstorming flow through an AI tool with unclear data retention policies. The confidentiality apparatus protects the wrong layer.


What Fiduciary-Grade AI Requires

Enterprises operating in fiduciary contexts need AI infrastructure built on different principles that protect the drafting phase:

- Edge processing. Analytical queries should be processed on device or on premises rather than transmitted to vendor servers. When cognitive process data doesn't leave your environment, it can't be aggregated into external profiles.

- Ephemeral processing. Input data must not persist beyond the session and must never become training data. The data should exist only in volatile memory (like RAM), and only for as long as the task requires. When the session ends, it vanishes. No logs, no history, no training set.

- Zero-knowledge architecture. End-to-end encryption should ensure that even the vendor cannot access query content. If your AI provider can read your team's analytical processes, so can anyone who compromises or compels that provider.

- Contractual guarantees. Pay a premium for what Nita Farahany, in her book "The Battle for Your Brain," calls "zero-training architecture." It’s a legal guarantee that not a single byte of your input will train, retrain or improve the model. Ever.
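The ephemeral-processing principle can be sketched in a few lines of Python. This is a hypothetical illustration of the session lifecycle, not a security control. `EphemeralSession` and `count_words` (a stand-in for the model) are invented names, and Python cannot guarantee that no other copies of the input exist. The original string, its encoded bytes, memory left behind when the buffer grew and anything the model function creates all sit outside the sketch's reach, which is why real systems would have to enforce this at the infrastructure level.

```python
class EphemeralSession:
    """Hypothetical sketch of ephemeral processing: session input lives only in
    an in-memory buffer, nothing is logged or written to disk, and the buffer is
    overwritten with zeros when the session ends."""

    def __init__(self):
        self._buf = bytearray()
        self.closed = False

    def __enter__(self):
        return self

    def add_input(self, text: str) -> None:
        if self.closed:
            raise RuntimeError("session has ended")
        self._buf.extend(text.encode("utf-8"))

    def process(self, model_fn):
        """Run model_fn (a stand-in for inference) on a read-only view of the
        buffer. The view is released afterwards, so model_fn cannot keep it."""
        if self.closed:
            raise RuntimeError("session has ended")
        with memoryview(self._buf) as mv, mv.toreadonly() as view:
            return model_fn(view)

    def __exit__(self, exc_type, exc, tb):
        # Overwrite every byte in place, then mark the session closed.
        for i in range(len(self._buf)):
            self._buf[i] = 0
        self.closed = True
        return False  # do not suppress exceptions


def count_words(view):
    # Placeholder "model". Note that decoding creates a temporary str copy.
    return len(bytes(view).decode("utf-8").split())


with EphemeralSession() as session:
    session.add_input("Plan B: rely on a novel reading of the statute")
    result = session.process(count_words)
```

Handing the model a read-only view that is released afterward means the model function cannot hold on to the session buffer once it returns, and any later attempt to add input raises an error.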

The Procurement Question

Most enterprise AI procurement evaluates capability, security and compliance. Few buyers evaluate cognitive privacy.

Here are some questions to ask vendors:

- Does user input become training data? Under what circumstances?

- Where is query processing performed? On-device, on-premises or in vendor cloud?

- What is the data retention policy for analytical sessions?

- Can vendor employees access query content?

- What happens to cognitive process data if the vendor is acquired, subpoenaed or breached?

If the vendor cannot answer these questions clearly, your fiduciary exposure may be undefined.

Remember that your AI tools don't just assist with thinking. They also observe it. In fiduciary contexts such as legal, medical, financial and board-level work, that observation may constitute exposure that current compliance frameworks don't address. Never give the algorithm the rough draft. That's where the real liability lives.
