Artificial intelligence may already outperform humans in narrow domains, but its path to ubiquity is being slowed by two constraints: high latency and major infrastructure costs.

Taalas claims it has a solution.

The company recently unveiled its first product, a hard-wired implementation of Llama 3.1 8B, delivered as both a chatbot demo and an inference API service. The startup says its silicon version dramatically reduces latency, cost and power consumption compared to conventional AI hardware.

Table of Contents

- The 2 Barriers Holding AI Back

- From Model to Silicon in 2 Months

- The Hard-Wired Llama: Performance Comparison

- What’s Next for Taalas

The 2 Barriers Holding AI Back

AI’s promise is clear. In many focused applications, models already exceed human performance, and experts predict the technology will significantly reduce workforces by 2030. Used well, AI amplifies human productivity and creativity. But two issues currently limit adoption:

1. Latency

Interactions with large language models often lag behind human cognition.

- Coding assistants may pause for minutes

- Developers lose their state of flow

- Agentic applications demand millisecond responses, not human-paced replies
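
To make the latency point concrete, here is a rough back-of-the-envelope sketch. The step count, tokens per step and decode rates are illustrative assumptions, not figures from Taalas or any vendor, and `chain_latency_seconds` is a hypothetical helper name.

```python
def chain_latency_seconds(steps: int, tokens_per_step: int, tokens_per_second: float) -> float:
    """Total decode time for a chain of sequential model calls.

    Ignores prompt processing, network and tool-call time, so the
    result is a lower bound on what a user would actually wait.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return steps * tokens_per_step / tokens_per_second


# Hypothetical agent: 20 sequential calls, 500 generated tokens each.
slow = chain_latency_seconds(steps=20, tokens_per_step=500, tokens_per_second=100.0)
fast = chain_latency_seconds(steps=20, tokens_per_step=500, tokens_per_second=10_000.0)

print(f"at 100 tokens/s:    {slow:.0f} s")  # 100 s
print(f"at 10,000 tokens/s: {fast:.0f} s")  # 1 s
```

Because each step waits on the previous one, the per-token decode rate multiplies through the whole chain, which is why agentic workloads are more sensitive to latency than a single chat reply.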

2. Cost

AI has a voracious appetite for resources like energy, water and land. Modern AI deployments require:

- Room-sized supercomputers

- Hundreds of kilowatts of power

- Liquid cooling systems

- Advanced packaging and stacked memory

- Massive I/O bandwidth and miles of cables

Scaling these systems means building sprawling data center campuses and absorbing extreme operational expenses.

From Model to Silicon in 2 Months

"Though society seems poised to build a dystopian future defined by data centers and adjacent power plants, history hints at a different direction. Past technological revolutions often started with grotesque prototypes, only to be eclipsed by breakthroughs yielding more practical outcomes."

- Ljubisa Bajic, Co-Founder & CEO, Taalas

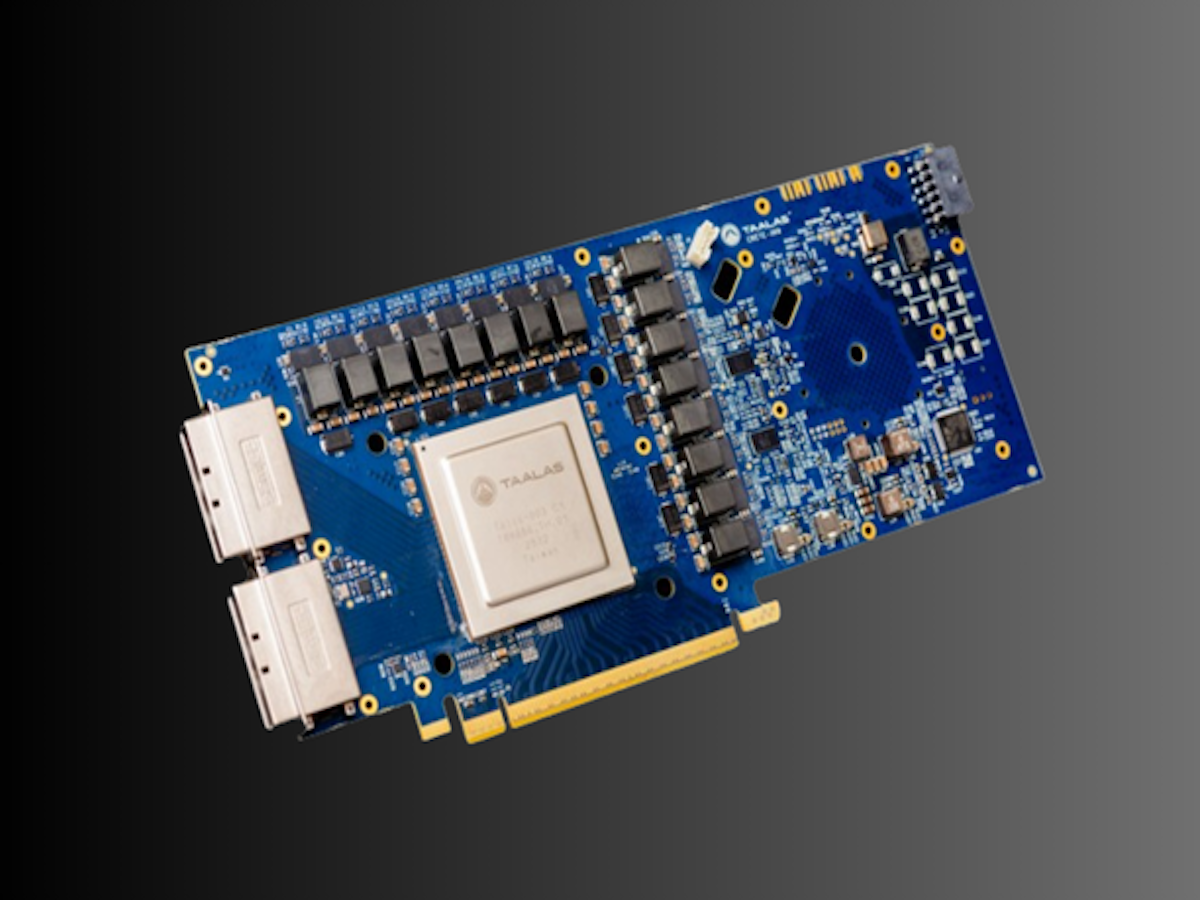

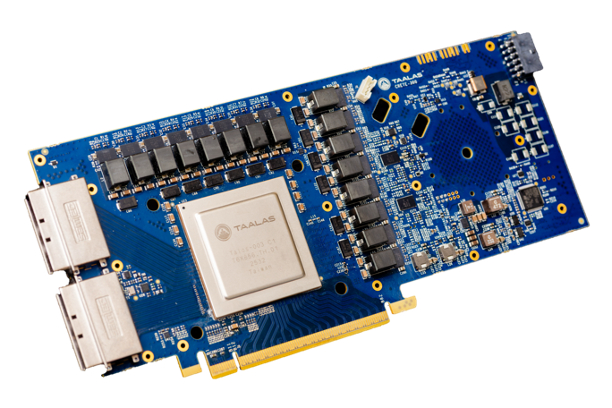

Founded roughly 2.5 years ago, Taalas says it has developed a platform that can turn any AI model into custom silicon within about two months of receiving it. The resulting hardware implementations, which the company calls Hardcore Models, are each designed for a single neural network.

According to Taalas, compared to traditional software-based inference, these systems are:

- ~10X faster

- ~20X cheaper to build

- ~10X lower in power consumption

Taalas’ approach rests on three core principles:

1. Total Specialization

Rather than building general-purpose AI accelerators, the company creates model-specific silicon. AI inference, it argues, is the most critical computational workload in existence, and the one that benefits most from deep specialization.

2. Merging Storage and Computation

Modern AI hardware separates memory (dense, cheap off-chip DRAM) from compute (on-chip logic). This divide introduces severe inefficiencies:

| Constraint | Impact |
|---|---|
| Off-chip DRAM access | Thousands of times slower than on-chip memory |
| Memory-compute separation | Requires advanced packaging |
| High-bandwidth memory (HBM) | Increases complexity and cost |
| Massive I/O bandwidth | Drives power consumption |
| Liquid cooling | Adds infrastructure burden |

Taalas says it eliminates this boundary by unifying storage and computation on a single chip at DRAM-level density.
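
A common rule of thumb helps explain why the memory-compute divide matters for latency: when a single user is generating text, every weight is read once per token, so the decode rate cannot exceed memory bandwidth divided by model size in bytes. The sketch below applies that estimate. The 8 billion parameter count is a rounded figure for Llama 3.1 8B, and the 3 TB/s bandwidth is a hypothetical round number for an HBM-class accelerator, not a measurement of any specific product or of Taalas' chip.

```python
def max_decode_tokens_per_second(
    params: float, bytes_per_param: float, bandwidth_bytes_per_s: float
) -> float:
    """Upper bound on single-stream decode rate for a bandwidth-bound model.

    Assumes every weight is streamed from memory once per generated token
    and ignores KV-cache traffic, batching and compute time.
    """
    model_bytes = params * bytes_per_param
    return bandwidth_bytes_per_s / model_bytes


PARAMS = 8e9                 # rounded parameter count (assumption)
HBM_CLASS_BANDWIDTH = 3e12   # hypothetical 3 TB/s off-chip bandwidth

bound_16bit = max_decode_tokens_per_second(PARAMS, 2.0, HBM_CLASS_BANDWIDTH)
bound_4bit = max_decode_tokens_per_second(PARAMS, 0.5, HBM_CLASS_BANDWIDTH)

print(f"16-bit weights: at most {bound_16bit:.1f} tokens/s per stream")  # 187.5
print(f"4-bit weights:  at most {bound_4bit:.1f} tokens/s per stream")   # 750.0
```

Under these assumptions, raising per-user speed means either moving fewer bytes per token or bringing the weights closer to the compute, which is the direction the on-chip approach described here takes.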

3. Radical Simplification

By removing the memory-compute divide and tailoring silicon to each model, Taalas redesigned its hardware stack from first principles. Its architecture does not rely on HBM, advanced packaging, 3D stacking, liquid cooling or high-speed I/O fabrics.

This engineering simplicity enables an order-of-magnitude reduction in total system cost, the company claims.

The Hard-Wired Llama: Performance Comparison

Taalas’ first public product is a hard-wired version of Llama 3.1 8B. The company selected the model for its small footprint, open-source availability and minimal logistical overhead. Although optimized for speed, the chip retains some flexibility through:

- Configurable context window size

- Support for fine-tuning via low-rank adapters (LoRAs)
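
For readers unfamiliar with low-rank adapters, the sketch below shows the general LoRA idea: the base weight matrix `W` stays fixed, and a small trainable update `B @ A`, scaled by `alpha / r`, is added on top. This is the generic technique in plain Python, not a description of how Taalas implements adapters in silicon, which the company has not detailed here. All names and values are illustrative.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]


def lora_forward(w: Matrix, a: Matrix, b: Matrix, x: Vector, alpha: float) -> Vector:
    """Compute W x + (alpha / r) * B (A x) with W frozen.

    Shapes: W is d_out x d_in, A is r x d_in, B is d_out x r.
    """
    r = len(a)  # adapter rank = number of rows in A
    scale = alpha / r
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))
    return [y + scale * u for y, u in zip(base, update)]


W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # frozen base weights (2 x 3)
A = [[1.0, 1.0, 1.0]]                   # rank-1 down-projection (1 x 3)
B_ZERO = [[0.0], [0.0]]                 # untrained adapter: no change
B_TUNED = [[2.0], [0.0]]                # hypothetical trained adapter
x = [1.0, 2.0, 3.0]

base_out = lora_forward(W, A, B_ZERO, x, alpha=1.0)
tuned_out = lora_forward(W, A, B_TUNED, x, alpha=1.0)
print(base_out)   # [1.0, 2.0]
print(tuned_out)  # [13.0, 2.0]
```

Because only `A` and `B` change, the large base matrix can remain fixed, which is what makes the approach a natural fit for hardware whose weights cannot be rewritten.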

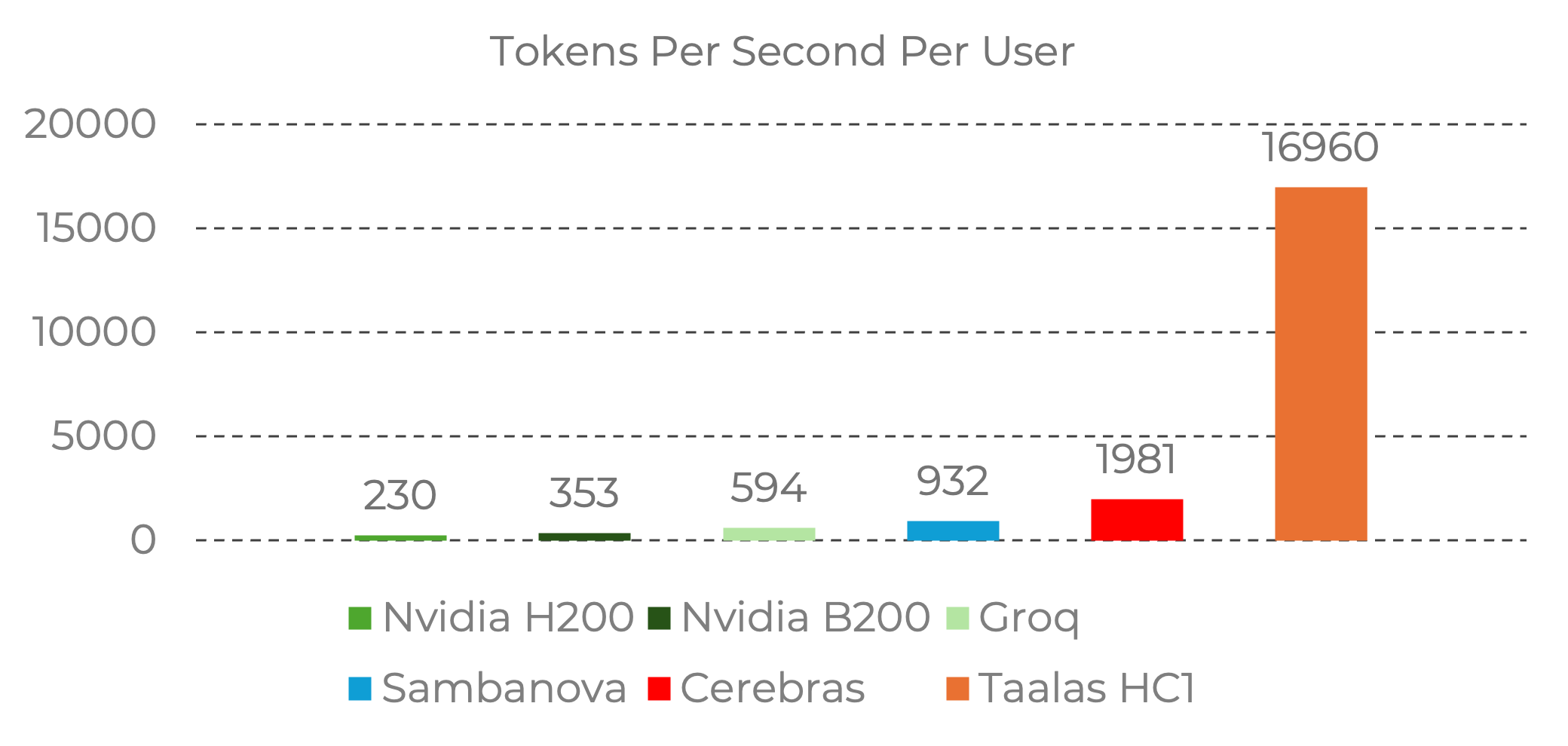

Taalas reports the following tokens per second per user:

According to the company, its silicon Llama achieves nearly 10X the speed of the current state of the art, while costing 20X less to build and consuming 10X less power.

When development began on Taalas’ first-generation silicon platform, low-precision formats were not yet standardized. As a result, the chip shows some quality degradation relative to GPU benchmarks. The company’s second-generation silicon will reportedly adopt standardized 4-bit floating-point formats to address these limitations while maintaining efficiency.
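
To illustrate why very low precision can cost quality, the sketch below rounds a block of weights to a 4-bit floating-point grid. The article does not say which format Taalas will use. This example assumes the E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit) defined in the OCP Microscaling specification, whose representable magnitudes are 0, 0.5, 1, 1.5, 2, 3, 4 and 6. For simplicity it uses a plain floating-point scale per block rather than the power-of-two scale that specification prescribes, and the weight values are made up.

```python
from typing import List, Tuple

# Magnitudes representable in E2M1 (assumed format, see lead-in).
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block(values: List[float]) -> Tuple[float, List[float]]:
    """Scale a block so its largest magnitude maps to 6, then round
    each value to the nearest representable E2M1 number."""
    max_abs = max((abs(v) for v in values), default=0.0)
    if max_abs == 0.0:
        return 1.0, [0.0 for _ in values]
    scale = max_abs / E2M1_MAGNITUDES[-1]
    codes = []
    for v in values:
        target = abs(v) / scale
        nearest = min(E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        codes.append(nearest if v >= 0 else -nearest)
    return scale, codes


def dequantize_block(scale: float, codes: List[float]) -> List[float]:
    """Map quantized codes back to approximate real values."""
    return [scale * c for c in codes]


weights = [3.0, -1.1, 0.2, 0.7]  # made-up example values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)

print(scale)     # 0.5
print(codes)     # [6.0, -2.0, 0.5, 1.5]
print(restored)  # [3.0, -1.0, 0.25, 0.75]
```

The gap between `weights` and `restored` is the rounding error that shows up as quality loss. A shared, standardized format mainly helps because models can be trained and evaluated against the same grid the hardware uses.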

What’s Next for Taalas

Taalas has additional systems planned:

- Spring: Mid-sized reasoning LLM (HC1 platform)

- Winter: Frontier-scale LLM on second-generation HC2 silicon, which promises higher density and faster execution

The company plans to release systems early, iterate openly and allow developers to experiment with ultra-low-latency inference.

Developers can now apply for access to the platform to test what can be built when intelligence becomes effectively instantaneous.