Anthropic researcher Prithvi Rajasekaran has spent months trying to solve two of the hardest problems in AI-assisted software development: getting AI to produce genuinely good design, and getting it to build a complete application from a single prompt — without anyone holding its hand.

The result, detailed in a new post from Anthropic's Labs team, is a three-agent architecture that can autonomously plan, build and quality-test full-stack software over multi-hour coding sessions, catching real bugs along the way.

Table of Contents

- The Core Problem: AI Is Too Nice to Itself

- The Three-Agent System

- Making Taste Gradable

- Head-to-Head: Solo vs. Harness

- Where This Is Headed

The Core Problem: AI Is Too Nice to Itself

The central insight driving this work is deceptively simple: AI models are poor critics of their own output.

"When asked to evaluate work they've produced, agents tend to respond by confidently praising the work — even when, to a human observer, the quality is obviously mediocre," Rajasekaran wrote.

This is especially problematic in design, where there's no binary pass/fail test. But even in code, where correctness is theoretically verifiable, AI agents tend to wave through their own errors.

The solution Rajasekaran landed on draws inspiration from Generative Adversarial Networks (GANs), a machine learning architecture in which two models compete: one generating outputs, the other trying to distinguish real from fake. Applying that adversarial logic to software development, he separated the role of building from the role of judging.

Related Article: Anthropic CEO Accuses OpenAI of 'Safety Theater' in Pentagon AI Deal

The Three-Agent System

The final architecture is made up of three specialized agents:

| Agent | Role |

|---|---|

| Planner | Expands a 1-4 sentence prompt into a full product spec |

| Generator | Builds the application, one feature at a time |

| Evaluator | Tests the live running app and grades each sprint |

The Planner was added specifically to address a gap in earlier versions of the harness: when given a raw prompt, models would underscope the task — starting to build before fully thinking through what they were making, and ending up with thinner, less-featured apps.

The Generator builds in structured sprints. Before writing any code, it negotiates a "sprint contract" with the Evaluator — agreeing on what "done" looks like before a single line is written.

The Evaluator is where the real innovation lies. Rather than reviewing static code, it uses the Playwright browser automation tool to interact with the live running application, clicking through features, testing API endpoints and probing database states the same way a human QA engineer would.

Making Taste Gradable

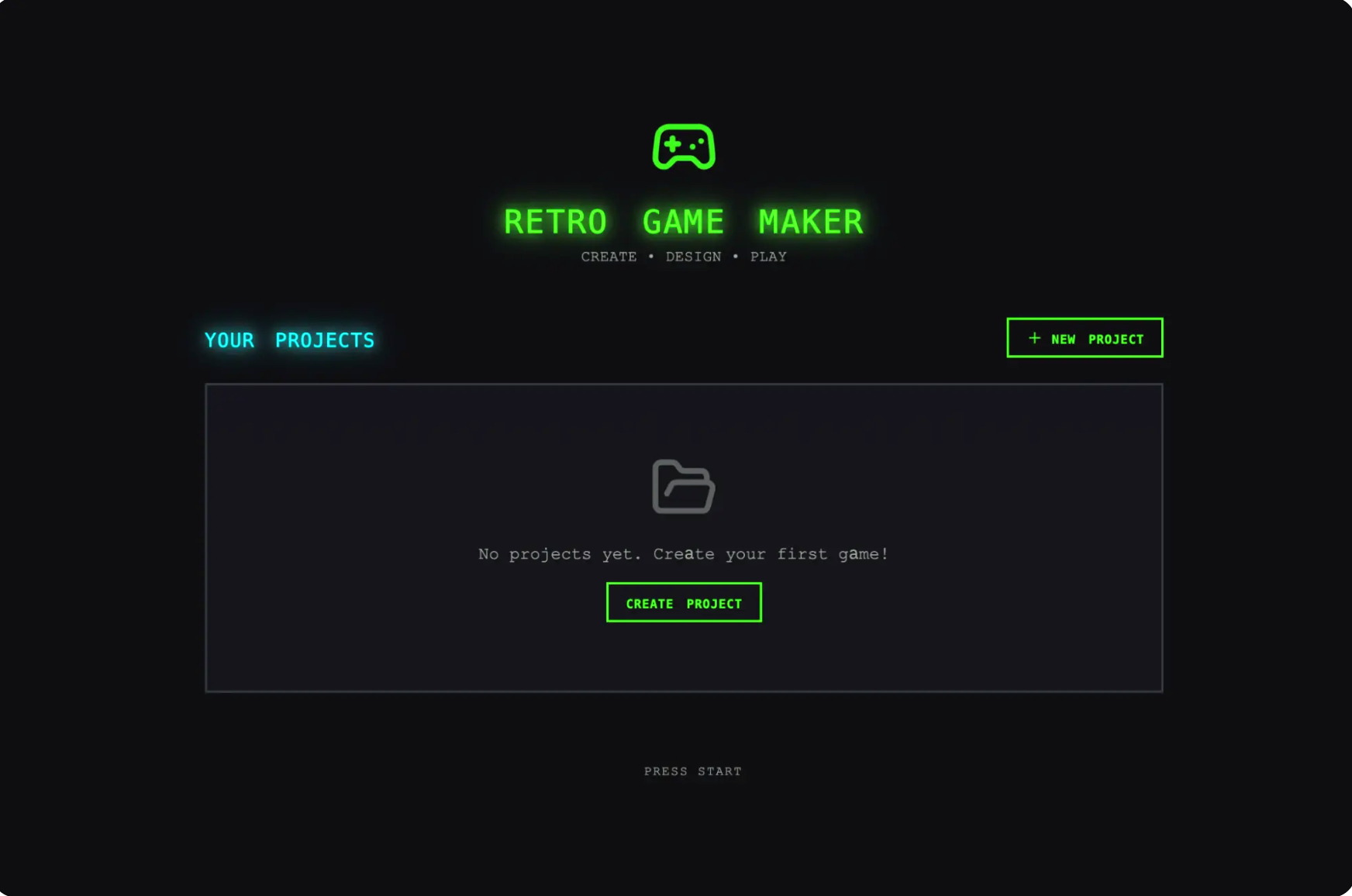

Before tackling code, Rajasekaran first tested the generator-evaluator loop on frontend design, where the self-evaluation problem was starkest. Out of the box, Claude defaults to what he calls "safe, predictable layouts" — functional, but visually unremarkable.

His solution was to formalize design quality into four graded criteria:

- Design Quality — Does the interface feel like a coherent whole?

- Originality — Are there deliberate creative choices, or just AI defaults?

- Craft — Technical fundamentals: spacing, typography, contrast ratios

- Functionality — Can users actually use it?

The criteria explicitly penalized what Rajasekaran calls "AI slop," the telltale purple gradients, unmodified stock components and generic patterns that emerge from models defaulting to their training distribution.

One striking result came from a Dutch art museum prompt. After nine iterations of refinement, the generator scrapped its conventional dark-themed landing page and reimagined the site as a 3D spatial experience — a CSS-perspective room with artwork hung on virtual walls and doorway-based navigation between gallery rooms. "It was the kind of creative leap that I hadn't seen before from a single-pass generation," Rajasekaran wrote.

Head-to-Head: Solo vs. Harness

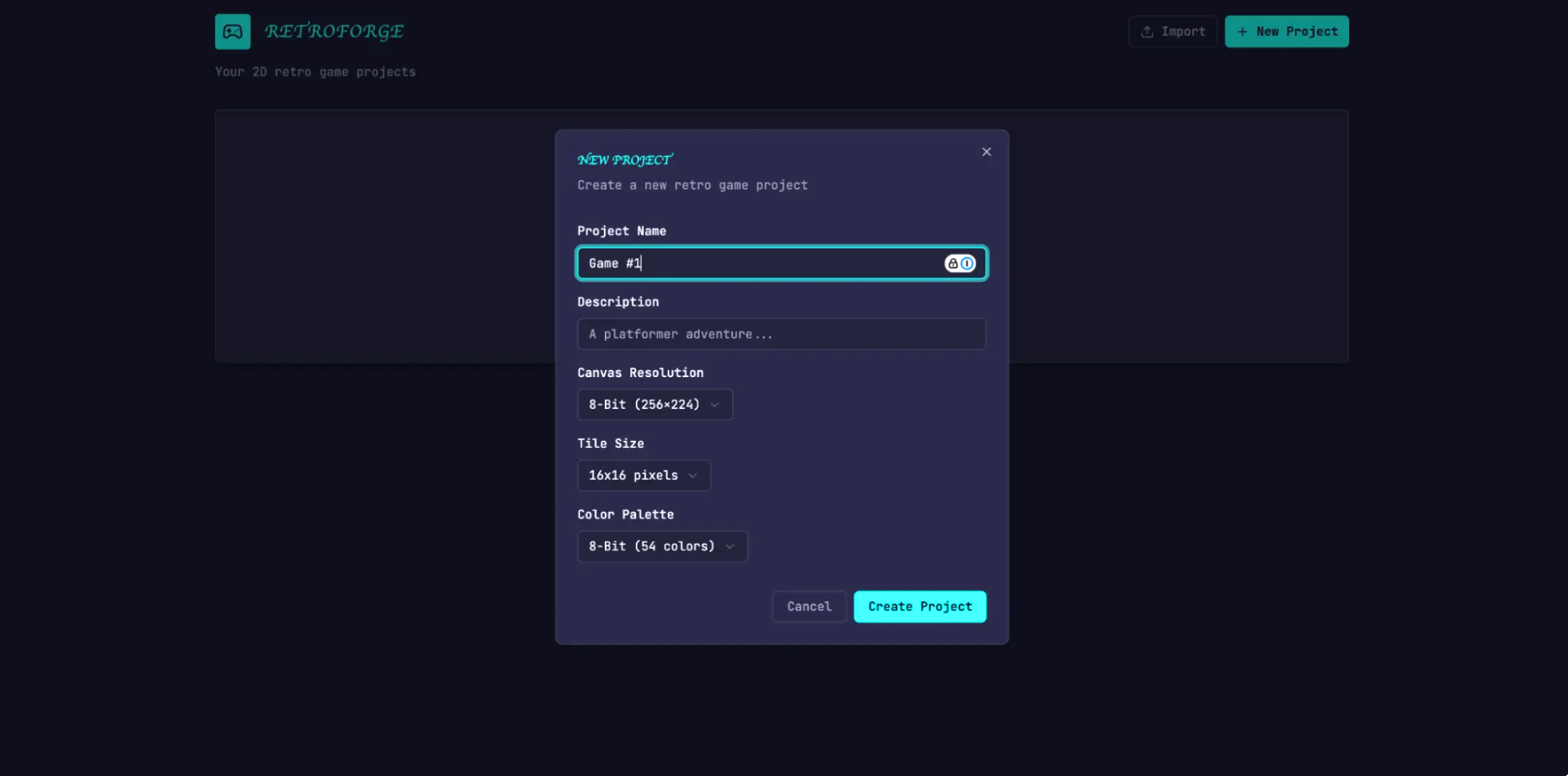

The architecture was put to a direct test with this prompt:

"Create a 2D retro game maker with features including a level editor, sprite editor, entity behaviors and a playable test mode."

The results:

| Approach | Duration | Cost |

|---|---|---|

| Solo agent | 20 min | $9 |

| Full harness | 6 hours | $200 |

The solo run produced an app that looked functional until you tried to play it. The game was broken: entities appeared on screen but nothing responded to input. The wiring between entity definitions and the game runtime had silently failed.

The harness version was playable. Physics had rough edges, but the core loop worked. The evaluator had caught dozens of real bugs along the way, including a FastAPI routing error where a route defined in the wrong order caused the server to try parsing the string "reorder" as an integer.

Related Article: Anthropic Launches Multi-Agent Code Review for Claude Code

Where This Is Headed

As models improve, the harness is expected to get simpler, not more complex. Moving from Claude Sonnet 4.5 to Opus 4.6 already allowed Rajasekaran to remove the sprint decomposition entirely, since the newer model could sustain coherent work across a two-hour build without it.

But Rajasekaran argued that the space of useful harness designs doesn't shrink as models get better. It moves.

"The interesting work for AI engineers is to keep finding the next novel combination," he wrote.