OpenAI is updating its privacy policy to reflect the introduction of advertising in ChatGPT and to provide greater transparency into how user data is collected, processed and controlled.

Under the revised policy, ads may appear on ChatGPT’s Free and Go plans but will remain absent from Plus, Pro, Enterprise, Business and Education subscriptions (for now). OpenAI says advertisers will not have access to users’ chat history, personal details or conversations. Instead, ad targeting will rely on contextual signals generated within ChatGPT itself, such as user interactions with ads or general usage patterns.

OpenAI’s privacy policy revisions also clarify how the company processes and retains data, including the legal bases it relies on for data processing.

Table of Contents

- How Will ChatGPT Ads Affect Users?

- The Privacy Risk for Users — Despite OpenAI's Assurances

- Ads Arrive as AI Firms Seek New Revenue Streams

- With or Without Ads, ChatGPT Risk Remains High

- The Steps Companies Must Take to Protect Themselves

How Will ChatGPT Ads Affect Users?

Users can manage their data preferences and personalization settings through their account dashboard, according to OpenAI, giving them more granular control over how their data is used.

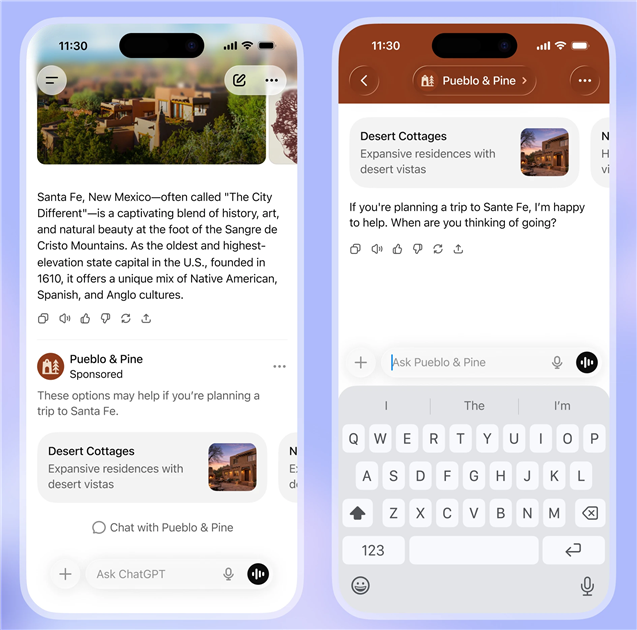

The policy also claims ads will be clearly labeled as sponsored content and visually separated from ChatGPT responses. Ads will reportedly not influence answers.

Advertisers will only receive aggregated performance data, such as views and clicks, rather than access to individual user interactions.

FAQs on ChatGPT Ads and User Privacy

According to OpenAI, the company collects the following categories of data:

- Identifiers (name, contact details, IP address, etc.)

- Commercial information (transaction history)

- Network activity (log data, usage data, devices used, etc.)

- Content (your prompts and content you upload to ChatGPT)

- Communication information (email, or connected device contacts)

- Geolocation data (general location, IP address, or precise location)

- Account information (credentials, payment information, date of birth, profile picture)

- Other information provided (information from event participation, surveys, vendors)

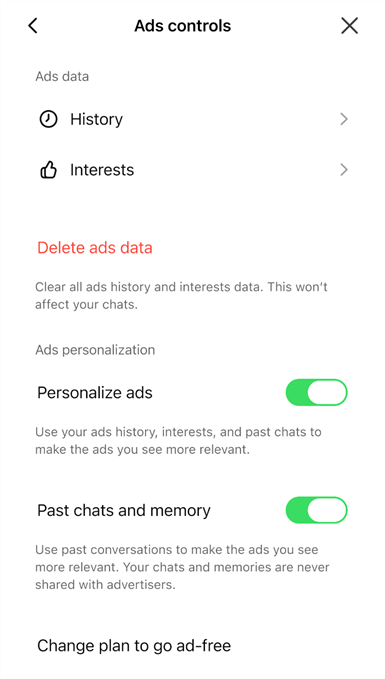

Users can customize ad controls, including opting into or out of personalized ads, by going to Settings > Ad Controls.

Within those settings, you can:

- Turn off ad personalization

- Dismiss ads and share feedback

- See why you're being shown a specific ad

- Clear data used for ads

According to OpenAI, if you opt out of ad personalization, you will still see ads based on the context of your current chat thread, but those ads won't draw on your other chat threads, ad history or interests.

Privacy rights depend heavily on your location, with some jurisdictions (such as the EU or California) offering stricter protections. You may have the right to:

- Get information on how OpenAI processes your personal data — including access to the personal data OpenAI has on file

- Request the deletion of your personal data

- Correct your personal data

- Face no retaliation when exercising your privacy rights

To exercise these rights, submit a request through privacy.openai.com or contact OpenAI by email.

The Privacy Risk for Users — Despite OpenAI's Assurances

Despite OpenAI's assurances, the introduction of advertising alters the privacy equation for consumers and enterprises alike, says Evelyn Mitchell-Wolf, senior analyst at Forrester.

“The introduction of advertising into ChatGPT certainly changes the privacy risk for any individual using the free or Go product tiers. Even if conversations are not shared with advertisers, the ad serving process will inherently require some level of contextual analysis to determine relevance.”

That contextual analysis introduces new considerations around data flows, retention and how user interactions may indirectly inform ad targeting, even without direct data sharing.

OpenAI’s claims about isolating advertiser access may not fully address concerns among technically sophisticated users, Mitchell-Wolf added. “After all, OpenAI has shared its intention to expand ads ‘responsibly’ into ‘sensitive or regulated topics like health, mental health or politics,’ so the company does plan on leveraging sensitive data even if it’s not shared directly with advertisers.”

Ads Arrive as AI Firms Seek New Revenue Streams

The addition of advertising arrives as the economics of GenAI are under increasing scrutiny. Training and operating large language models (LLMs) require enormous computational resources; advertising represents a familiar monetization strategy for scaling consumer-facing technology platforms.

“This move confirms what we’ve long suspected: subscriptions alone are not sufficient to support the cost structure of large-scale LLMs,” said Mitchell-Wolf. “Over time, we’ll see a bifurcation: ad-supported AI for broad consumer use and higher-cost, tightly governed models for enterprise and regulated environments.”

The shift could also reshape competition across the AI industry, potentially favoring providers with established advertising infrastructure and large consumer reach. As Mitchell-Wolf noted, “Advertising introduces a competitive dynamic that favors providers with massive consumer reach and strong ad infrastructure, such as Google.”

With or Without Ads, ChatGPT Risk Remains High

From a privacy standpoint, advertising does not necessarily introduce new risks, said Frank Dickson, GVP for security and trust at IDC. But it does reinforce existing concerns about sending sensitive information to public AI systems.

“The privacy risk of ChatGPT is substantial; the introduction of advertising does not necessarily change that,” he explained. “You have the same very substantial risk.”

Highly relevant advertising can be generated using minimal information, he emphasized, especially given the deeply personal nature of user queries. “Given the intimacy of the queries, the query alone is enough to present highly relevant advertising, even without the capture of personal data or the sharing of the information with advertisers.”

The Steps Companies Must Take to Protect Themselves

For enterprises, particularly those operating in regulated industries such as healthcare, finance or government, the introduction of advertising may reinforce the importance of strict governance controls around GenAI use.

“The only way to ensure the prompts, proprietary data and sensitive business information are not used for ad targeting or model training is to not send the data to a third party cloud based LLM in the first place,” Dickson said, adding that for organizations to minimize risk, they need to establish a proprietary GenAI instance, preferably on-premises.

Even without advertising, many enterprises have already established policies limiting employee use of public AI tools for sensitive work — policies Dickson said should remain in force regardless of OpenAI’s monetization model. The primary privacy risks don't stem from advertising alone, he emphasized, but rather from the fundamental architecture of public AI systems.

“Critical or sensitive data should never be uploaded to public models,” said Dickson. “Nothing should change except the strength of the resolve to protect sensitive, private and critical organization data from being sent to public models and applications.”