When AI gives a wrong answer, what happens next?

If the answer is "nothing" or "eventually someone notices," your governance is broken. If the answer is "we lock everything down until we are sure it is perfect," your governance is also broken, in the opposite direction.

Part 1 and Part 2 of this series established that enterprise AI complexity grows exponentially with scale and that information architecture provides the retrieval foundation. This is the third pillar: governance designed for iteration rather than prevention. Without it, even well-architected content degrades over time, and the AI system your organization invested in quietly becomes operationally useless.

Table of Contents

- Why Traditional Governance Fails for AI

- The 3-Layer Governance Stack

- The Feedback Loop: The Heart of AI Governance

- Risk Stratification: Applying Governance Proportionally

- What Governance Failure Looks Like

- Governance as Accelerator

- The Governance Question That Matters

Why Traditional Governance Fails for AI

Traditional content governance was designed for a world where humans created content, humans reviewed content and humans published content. Every step was manual. Control meant preventing mistakes before they happened. Quality meant perfection at launch.

That model does not work for AI. AI will make mistakes. It will hallucinate. It will retrieve outdated content. It will miss context that humans would catch. No amount of pre-launch review will prevent every error. The question is not whether AI will be wrong. It is how quickly you can detect and correct those errors.

The organizations that succeed with GenAI do not prevent all mistakes. They catch and fix mistakes faster than anyone else.

The mindset shift is profound, analogous to moving from gatekeeping to gardening. Traditional governance builds gates: content is reviewed, approved and posted. AI-era governance cultivates a garden: you plant, you monitor, you prune, you refine. The system is never "done." It is always improving.


The 3-Layer Governance Stack

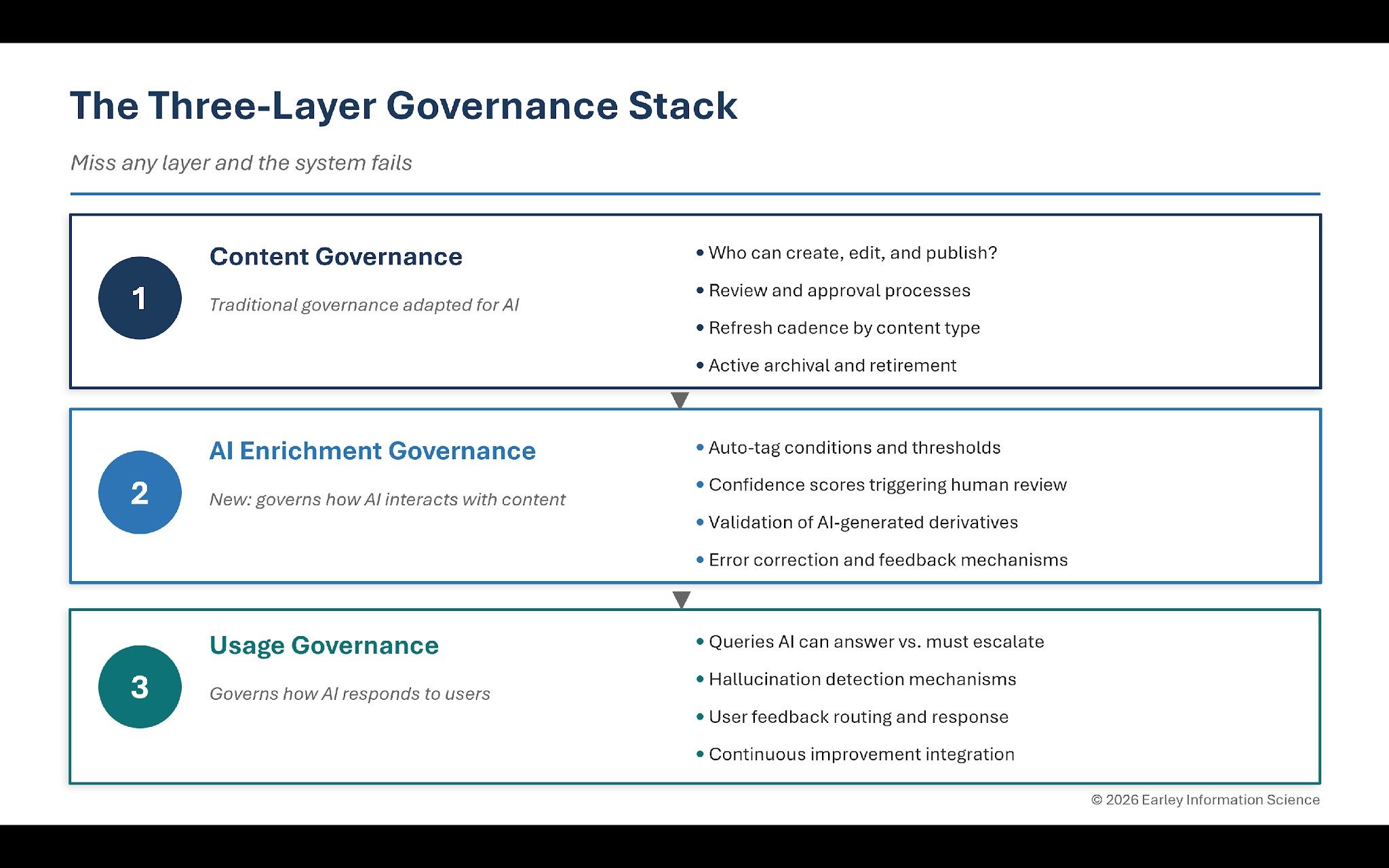

Effective AI governance operates at three distinct layers. Miss any one of them and the system fails.

Layer 1: Content Governance

This is traditional governance adapted for AI context. It addresses four questions:

- Who can create, edit and publish content? Not everyone should modify the knowledge base your AI draws from. But the approval process cannot be so burdensome that content goes stale waiting for sign-off.

- What is the review and approval process? High-stakes content (compliance, customer-facing, safety-related) needs human review. Routine content can auto-publish with spot-check monitoring. The governance framework defines which is which.

- How often does content need to be refreshed? Different content types have different shelf lives. Product specifications might need quarterly review. Policy documents might need annual review. The cadence should be explicit, not assumed.

- What happens to outdated content? This is where most organizations fail. Old content does not disappear. It sits in the knowledge base, waiting to be retrieved by AI and served to users as if it were current. Active archival and retirement processes are not optional.
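The refresh and retirement questions above can be made explicit in a small check. What follows is a minimal sketch, not a prescribed implementation. It assumes each document carries a content type and a `last_reviewed` date. The 90-day and 365-day intervals echo the quarterly and annual examples above. The `Document` class, the 180-day fallback and all function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical review cadences per content type, in days.
REVIEW_INTERVAL_DAYS = {
    "product_spec": 90,   # quarterly review
    "policy": 365,        # annual review
}
DEFAULT_INTERVAL_DAYS = 180  # assumed fallback for content types not listed above


@dataclass
class Document:
    doc_id: str
    content_type: str
    last_reviewed: date


def is_overdue(doc: Document, today: date) -> bool:
    """Return True when the document has exceeded its review interval."""
    interval = REVIEW_INTERVAL_DAYS.get(doc.content_type, DEFAULT_INTERVAL_DAYS)
    return today - doc.last_reviewed > timedelta(days=interval)


def overdue_documents(docs: list[Document], today: date) -> list[str]:
    """List the IDs of documents due for review, archival or retirement."""
    return [d.doc_id for d in docs if is_overdue(d, today)]
```

Run on a schedule, a check like this turns "the cadence should be explicit" into a queue of documents that someone owns, rather than a policy statement nobody enforces.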

Layer 2: AI Enrichment Governance

This layer is new. It governs how AI interacts with content and requires decisions that most governance frameworks have never addressed.

- Can AI auto-tag content, and under what conditions? For some content types and metadata fields, AI can tag autonomously. For others, AI suggests and humans approve. The governance framework draws the line.

- What confidence threshold triggers human review? AI enrichment tools typically attach a confidence score to each tag or classification. Below a defined threshold, content should be flagged for human validation rather than auto-published. Where you set that threshold is a governance decision with direct accuracy implications.

- Who validates AI-generated derivatives? If AI generates summaries, FAQs or synthesis documents from source content, who ensures accuracy? This requires explicit ownership and review processes that did not exist in pre-AI governance models.

- How are AI mistakes fed back into improvement? When AI tags incorrectly or classifies wrongly, the escalation path, the correction process and the mechanism for feeding corrections into future enrichment all need to be defined.
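The threshold decision described above reduces to a single routing rule. The sketch below assumes the enrichment step returns a confidence score between 0 and 1. The 0.90 threshold, the `TagSuggestion` class and the route names are illustrative assumptions, not values any particular tool prescribes.

```python
from dataclasses import dataclass

# Hypothetical threshold; where it sits is the governance decision.
AUTO_PUBLISH_THRESHOLD = 0.90


@dataclass
class TagSuggestion:
    doc_id: str
    tag: str
    confidence: float  # assumed to fall in the range 0.0 to 1.0


def route_suggestion(s: TagSuggestion, threshold: float = AUTO_PUBLISH_THRESHOLD) -> str:
    """Send high-confidence tags to auto-publish and the rest to human review."""
    if not 0.0 <= s.confidence <= 1.0:
        raise ValueError(f"confidence out of range: {s.confidence}")
    return "auto_publish" if s.confidence >= threshold else "human_review"
```

Raising the threshold sends more suggestions to reviewers and lowers the error rate of what auto-publishes. Lowering it does the opposite. Corrections made during human review are the natural input for tuning the value over time.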

Layer 3: Usage Governance

This layer governs how AI responds to users and closes the loop between system output and system improvement.

- What queries can AI answer versus escalate? Some questions should never receive an AI-generated answer without human validation: legal advice, medical guidance, safety-critical decisions. Governance defines the boundaries.

- How are hallucinations detected? AI can generate plausible but fabricated information. You need mechanisms to detect when this happens: user feedback, automated consistency checking, sampling and review.

- How does user feedback flow into improvement? Thumbs up/down signals, support escalations and abandonment patterns all contain information about AI quality. Governance defines how that feedback routes into content correction, metadata refinement and model improvement.
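The answer-versus-escalate boundary can be written down as an explicit routing rule. This sketch assumes an upstream classifier has already labeled each query with a topic, which is a hypothetical component. The restricted topics mirror the examples above. Treating a query with no retrieved sources as a content gap rather than letting the model answer is an added assumption, not something the framework above mandates.

```python
from dataclasses import dataclass

# Topics that should not receive an unreviewed AI answer (from the examples above).
ESCALATION_TOPICS = frozenset({"legal", "medical", "safety"})


@dataclass
class Query:
    text: str
    topic: str              # assumed output of an upstream topic classifier
    retrieved_sources: int  # number of documents retrieval returned


def route_query(q: Query) -> str:
    """Decide whether the AI answers, a human takes over, or a gap is logged."""
    if q.topic.lower() in ESCALATION_TOPICS:
        return "escalate_to_human"
    if q.retrieved_sources == 0:
        # Nothing to ground an answer on: record a content gap instead of guessing.
        return "flag_content_gap"
    return "answer_with_ai"
```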

The Feedback Loop: The Heart of AI Governance

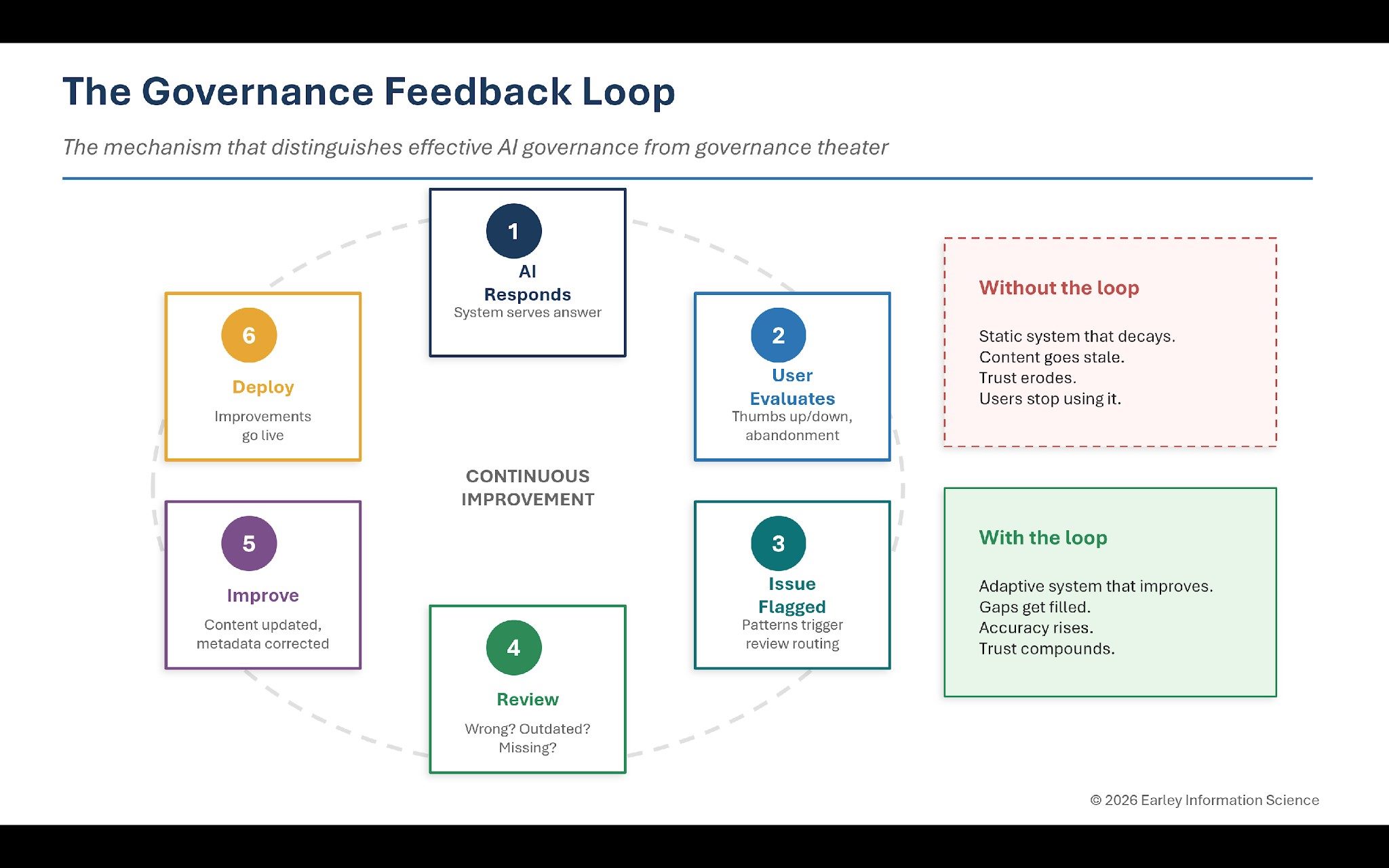

If there is one mechanism that distinguishes effective AI governance from governance theater, it is the feedback loop.

The cycle operates in six stages:

- AI responds: The system serves an answer to a user query.

- User evaluates: The user indicates whether the response was helpful, sometimes explicitly (thumbs up or down) and sometimes implicitly (abandoning the interaction and calling support instead).

- Issue flagged: Problems are identified and routed. Not every negative signal triggers review, but patterns do.

- Review: A human examines the issue. Was the content wrong? Was it outdated? Did AI misinterpret the query? Was content missing entirely?

- Improve: Based on diagnosis, something changes. Content gets updated. Metadata gets corrected. The taxonomy gets refined. Enrichment thresholds get adjusted.

- Deploy: Improvements go live. The cycle repeats.
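The "issue flagged" stage depends on separating patterns from noise. One way to sketch that, assuming each feedback event can be tied to the source document behind the answer: flag a document only when it has enough feedback to judge and its negative rate exceeds a limit. The `Feedback` class, the minimum of five events and the 30% limit are all hypothetical choices.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Feedback:
    doc_id: str    # document the answer was drawn from (assumed to be tracked)
    helpful: bool  # explicit thumbs up/down, or an inferred signal such as abandonment


def docs_needing_review(
    events: Iterable[Feedback],
    min_events: int = 5,
    max_negative_rate: float = 0.3,
) -> list[str]:
    """Flag documents whose negative-feedback rate forms a pattern, not a one-off."""
    totals: Counter = Counter()
    negatives: Counter = Counter()
    for e in events:
        totals[e.doc_id] += 1
        if not e.helpful:
            negatives[e.doc_id] += 1
    flagged = [
        doc_id
        for doc_id, total in totals.items()
        if total >= min_events and negatives[doc_id] / total > max_negative_rate
    ]
    return sorted(flagged)
```

A single thumbs-down on a rarely used document stays below the event minimum and is ignored. A heavily used document with a sustained negative rate lands in the review queue.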

Without this loop, you have a static system that decays. Content goes stale. AI makes the same mistakes repeatedly. User trust erodes. Eventually people stop using the system and the investment yields nothing.

With this loop, the system becomes adaptive. Coverage gaps get filled. AI accuracy improves. User trust grows. Usage increases, which generates more feedback, which drives more improvement.

The ultimate governance goal: enable AI to improve content quality faster than content decays.

Risk Stratification: Applying Governance Proportionally

Not all content warrants the same governance intensity. The organizations that sustain quality at scale stratify their governance by risk level.

- High risk (customer-facing, compliance-sensitive, safety-related): human review required, strict controls, high confidence thresholds for AI enrichment, frequent refresh cycles.

- Medium risk (internal operations, standard procedures): spot-check monitoring, automated enrichment with human oversight, periodic rather than continuous review.

- Low risk (routine information, internal reference): auto-publish with feedback monitoring, automated enrichment, review triggered by user signals rather than by schedule.

This stratification is what makes governance scalable. Applying high-risk controls to every piece of content creates the bottleneck that causes content to go stale. Applying low-risk controls to compliance content creates liability. The governance framework must distinguish among the tiers and apply intensity proportionally.
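The tiers can be captured as configuration so that the controls applied to a document follow from its classification rather than from case-by-case judgment. The sketch below is illustrative only. The tier definitions follow the list above, while the specific confidence values, review intervals and attribute names are assumptions an organization would set for itself.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass(frozen=True)
class GovernancePolicy:
    human_review: str                    # "required", "spot_check" or "on_signal"
    enrichment_confidence: float         # minimum confidence for automated enrichment
    review_interval_days: Optional[int]  # None means review is triggered by user signals


# Hypothetical values; only the relative ordering reflects the tiers above.
POLICIES = {
    RiskTier.HIGH: GovernancePolicy("required", 0.95, 90),
    RiskTier.MEDIUM: GovernancePolicy("spot_check", 0.85, 180),
    RiskTier.LOW: GovernancePolicy("on_signal", 0.70, None),
}


def classify(customer_facing: bool, compliance_sensitive: bool,
             safety_related: bool, operational: bool) -> RiskTier:
    """Assign the highest tier that any attribute of the content triggers."""
    if customer_facing or compliance_sensitive or safety_related:
        return RiskTier.HIGH
    if operational:
        return RiskTier.MEDIUM
    return RiskTier.LOW
```

Checking the high-risk attributes first means a document that is both operational and compliance-sensitive receives the stricter controls.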

What Governance Failure Looks Like

Organizations with broken governance exhibit predictable patterns, and recognizing them early is the best way to intervene before user trust erodes.

- The Bottleneck: One team controls all content changes. A queue builds up. Updates take months to clear, and content goes stale while it waits for approval. Users encounter outdated information and lose confidence. The governance process is technically in place but practically useless.

- The Free-for-All: Anyone can change anything. No formal review process exists. AI retrieves conflicting information because multiple versions of the same content coexist without authority ranking. Users get different answers to the same question.

- The Zombie System: AI launches successfully. Six months later, content is stale, errors have accumulated and users have migrated to workarounds. Nobody notices because nobody is monitoring. The system is technically running but functionally dead.

Every one of these failures is a governance failure, not a technology failure. And every one is preventable with the three-layer stack and feedback loop described above.
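The free-for-all pattern names a specific missing mechanism: authority ranking among coexisting versions. As a rough sketch of what that could look like, assuming each version records its source and last-updated date, the function below keeps one canonical version per topic by preferring the most authoritative source and breaking ties by recency. The source names and their ordering are hypothetical.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical authority order: a lower number outranks a higher one.
AUTHORITY_RANK = {"official_policy": 0, "team_wiki": 1, "personal_notes": 2}


@dataclass
class Version:
    topic: str
    source: str
    updated: date
    text: str


def canonical_versions(versions: list[Version]) -> dict[str, Version]:
    """Pick one version per topic: highest authority first, then most recent."""
    best: dict[str, tuple[tuple[int, int], Version]] = {}
    for v in versions:
        # Unknown sources rank below every known source.
        rank = AUTHORITY_RANK.get(v.source, len(AUTHORITY_RANK))
        key = (rank, -v.updated.toordinal())
        if v.topic not in best or key < best[v.topic][0]:
            best[v.topic] = (key, v)
    return {topic: v for topic, (_, v) in best.items()}
```

Only the canonical version would be exposed to retrieval, so two users asking the same question draw on the same source.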


Governance as Accelerator

Done right, governance is not overhead. It is an accelerator.

- Faster improvement: Clear processes mean errors get fixed in hours rather than festering for weeks.

- Sustainable trust: Users learn that problems get corrected, so they keep using the system even when they encounter occasional issues.

- Scalable quality: Governance that works at ten thousand documents works at a hundred thousand documents. Manual heroics do not.

- Continuous learning: Feedback loops mean the system improves over time rather than decaying. Each interaction becomes a signal that can make the next one better.

This is the flywheel that Parts 1 and 2 of this series have been building toward. The integration infrastructure (Part 1) and the information architecture (Part 2) provide the foundation. Governance provides the operating model that keeps the foundation current, reliable and improving.

The Governance Question That Matters

Traditional governance asks: "How do we prevent mistakes?"

AI-era governance asks: "How do we detect and correct mistakes faster than our competitors?"

The shift is fundamental. AI will make errors. Content will go stale. Users will encounter problems. The question is whether your governance enables rapid response or creates organizational paralysis.

When AI gives a wrong answer, what happens next? If you can answer that question with a clear, fast and reliable process, you have governance that works. If you cannot, start building it now, before your GenAI initiative teaches users that AI cannot be trusted.

This is Part 3 of a three-part VKTR series on scaling enterprise AI. Part 1, "The Pilot Paradox," examined why integration complexity grows exponentially. Part 2, "Garbage In, Confidence Out," addressed the information architecture foundation that enterprise retrieval requires.
