Key Takeaways

- Cerebras plans to sell 28 million shares at $115 to $125 each in a Nasdaq IPO.

- The AI chipmaker could reach a valuation of about $26.6 billion at the top of the range.

- The offering would give public investors another way to bet on AI infrastructure beyond Nvidia.

- Cerebras is shifting from selling hardware to operating cloud-based AI compute services.

Cerebras Systems is preparing to test just how much appetite public markets still have for AI infrastructure.

The Sunnyvale, California-based AI chipmaker is seeking to raise up to $3.5 billion in an initial public offering on the Nasdaq, according to an updated prospectus filed Monday. Cerebras plans to offer 28 million shares priced between $115 and $125 apiece, giving the company a potential valuation of roughly $26.6 billion at the top end of the range.

That would mark a step up from the company’s $23 billion private valuation in February, when it raised money from investors, including Advanced Micro Devices. It would also place Cerebras among the most closely watched technology IPOs of the current AI cycle — not because it's another software company promising AI productivity gains, but because it sits much closer to the physical bottleneck of the AI boom: compute.

Table of Contents

- A Public-Market Test for AI Infrastructure

- The Deal at a Glance

- From Hardware Sales to Cloud Compute

- Revenue Growth Gives Cerebras a Stronger Story

- Why the IPO Matters

A Public-Market Test for AI Infrastructure

After a long slowdown in technology IPOs, AI has reopened parts of the market for companies that can plausibly claim a seat in the infrastructure stack. Generative AI has created intense demand for chips, data centers, cloud capacity and power — the less glamorous machinery behind chatbots, copilots and AI agents.

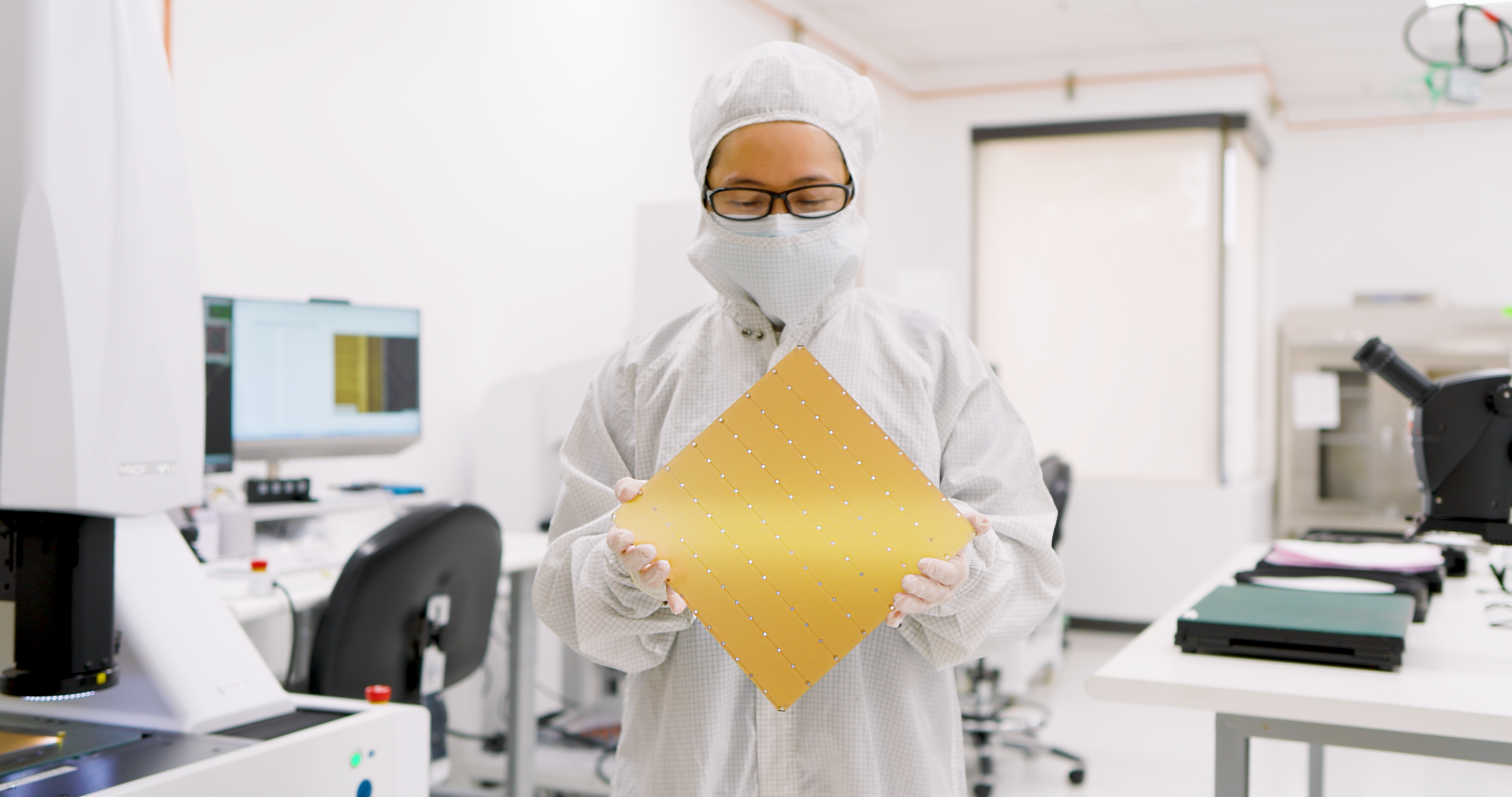

Cerebras aims to be one of the rare public-market alternatives to Nvidia’s dominance in AI infrastructure. Nvidia’s graphics processing units remain the default engine for much of the AI industry, but Cerebras has built its pitch around a different architecture: wafer-scale processors designed specifically for large-scale AI workloads.

Unlike conventional processors cut from a silicon wafer, Cerebras’ Wafer-Scale Engine uses an entire wafer as one giant processor. The company says the design brings compute and memory closer together, which can reduce some of the bottlenecks that slow AI training and inference.

That pitch now has to survive public-market scrutiny.


The Deal at a Glance

| IPO detail | Cerebras' plan |
|---|---|
| Exchange | Nasdaq Global Select Market |
| Expected ticker | CBRS |
| Shares offered | 28 million |
| Price range (per share) | $115 to $125 |
| Potential gross proceeds | Up to $3.5 billion |
| Potential valuation | Up to about $26.6 billion |
| Underwriter option | Additional 4.2 million shares |

If underwriters exercise their option to buy another 4.2 million shares, Cerebras could bring in an additional $525 million at the top of the range. Morgan Stanley, Citigroup, Barclays and UBS Investment Bank are listed as lead book-running managers for the offering.

Notably, co-founder and CEO Andrew Feldman is not selling shares in the IPO. After the offering, he is expected to hold 10.3 million shares, worth as much as $1.28 billion if the IPO prices at the high end.
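The headline numbers above follow from simple share-count-times-price arithmetic, which the short Python sketch below reproduces. The inputs are the figures reported in this article; the variable and function names are illustrative only. The implied share count is a rough back-calculation that assumes the valuation equals shares outstanding multiplied by the offer price, which the article does not state.

```python
# Figures as reported in the article; names are illustrative, not from the prospectus.
SHARES_OFFERED = 28_000_000
OPTION_SHARES = 4_200_000          # underwriters' option
CEO_SHARES = 10_300_000            # Andrew Feldman's expected post-IPO holding
PRICE_LOW, PRICE_HIGH = 115, 125   # dollars per share
TOP_VALUATION = 26_600_000_000     # approximate valuation at the top of the range
PRIVATE_VALUATION = 23_000_000_000 # February private round


def gross_value(shares: int, price: int) -> int:
    """Dollar value of a block of shares at a given price."""
    return shares * price


base_low = gross_value(SHARES_OFFERED, PRICE_LOW)
base_high = gross_value(SHARES_OFFERED, PRICE_HIGH)
option_high = gross_value(OPTION_SHARES, PRICE_HIGH)
ceo_stake_high = gross_value(CEO_SHARES, PRICE_HIGH)

# Assumption: valuation = shares outstanding x offer price.
implied_shares = TOP_VALUATION / PRICE_HIGH

# Step-up from the private valuation to the top-of-range IPO valuation.
step_up = TOP_VALUATION / PRIVATE_VALUATION - 1

print(f"Base proceeds: ${base_low / 1e9:.2f}B to ${base_high / 1e9:.2f}B")
print(f"Option proceeds at top of range: ${option_high / 1e6:.0f}M")
print(f"CEO stake at top of range: ${ceo_stake_high / 1e9:.4f}B")
print(f"Implied shares outstanding: {implied_shares / 1e6:.1f}M")
print(f"Step-up over private valuation: {step_up:.1%}")
```

At the bottom of the range the base offering would raise about $3.22 billion rather than $3.5 billion, and the top-of-range valuation works out to a step-up of roughly 16% over the February round.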

From Hardware Sales to Cloud Compute

Cerebras’ IPO is also a story about a business model in transition.

The company previously sought to go public in September 2024, then withdrew the paperwork as it shifted away from a more straightforward hardware sales model. Its current strategy leans more heavily on cloud services powered by its own processors, a move that makes Cerebras look less like a traditional semiconductor vendor and more like an AI infrastructure provider.

That transition matters because customers increasingly want access to compute capacity, not just ownership of hardware. AI labs and enterprises are trying to run larger models, serve more users and lower inference costs without waiting for the next wave of Nvidia GPU capacity.

Cerebras’ biggest validation point is its OpenAI relationship. In January, the company said it would provide up to 750 megawatts of AI computing power to OpenAI through 2028 in a deal valued at more than $20 billion.

That gives Cerebras a marquee customer at the center of the AI boom. It also creates a familiar IPO question: how much of the company’s growth depends on a small number of very large customers?

Revenue Growth Gives Cerebras a Stronger Story

Unlike many AI companies selling future potential, Cerebras is entering the IPO process with meaningful recent financial momentum.

The company reported fourth-quarter revenue of $510 million, up about 76% from the year-earlier period, and net income of $87.9 million for the quarter. That profitability snapshot could help Cerebras stand out in a market that has become more selective about high-growth companies burning cash.
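As a rough check on what those figures imply, the sketch below backs out the year-earlier revenue and the quarter's net margin. It uses only the numbers reported above; because the growth rate is given as "about 76%", the year-earlier revenue is an approximation, and the variable names are illustrative.

```python
# Figures as reported in the article; the growth rate is approximate.
REVENUE = 510_000_000      # fourth-quarter revenue, dollars
GROWTH = 0.76              # "about 76%" year over year
NET_INCOME = 87_900_000    # fourth-quarter net income, dollars

# Year-earlier revenue implied by the growth rate: current / (1 + growth).
prior_revenue = REVENUE / (1 + GROWTH)

# Net margin: net income as a share of revenue.
net_margin = NET_INCOME / REVENUE

print(f"Implied year-earlier revenue: ${prior_revenue / 1e6:.0f}M")
print(f"Net margin: {net_margin:.1%}")
```

Under these inputs, the year-earlier quarter comes out near $290 million and the net margin near 17%.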

The comparison point is CoreWeave, the AI cloud provider that rents access to Nvidia GPUs and raised $1.5 billion in an IPO last year. CoreWeave’s listing showed that investors were willing to back AI infrastructure companies even when profitability was still under pressure. Cerebras is now asking whether the same market will reward a company trying to reduce dependence on Nvidia hardware rather than monetize it.


Why the IPO Matters

The Cerebras IPO will be a referendum on three big questions facing the AI market:

- Can specialized AI processors win investor confidence against Nvidia’s ecosystem advantage?

- Will public investors reward companies tied to AI infrastructure as strongly as private investors have?

- Can Cerebras turn its cloud pivot into a durable, scalable business?

For now, the company has the components investors tend to like: revenue growth, a massive AI customer, a differentiated technology story and exposure to one of the biggest capital spending cycles in tech.

But the risks are just as clear. Nvidia remains the center of gravity in AI infrastructure. Cloud services are expensive to build and operate. Heavy customer concentration can make revenue growth look more predictable than it really is. And AI valuations have already priced in a lot of future demand.

Cerebras hopes public investors still want another way into the AI compute boom. The IPO roadshow will show whether Wall Street sees it as a true Nvidia alternative or just another expensive bet on demand that still has to materialize.