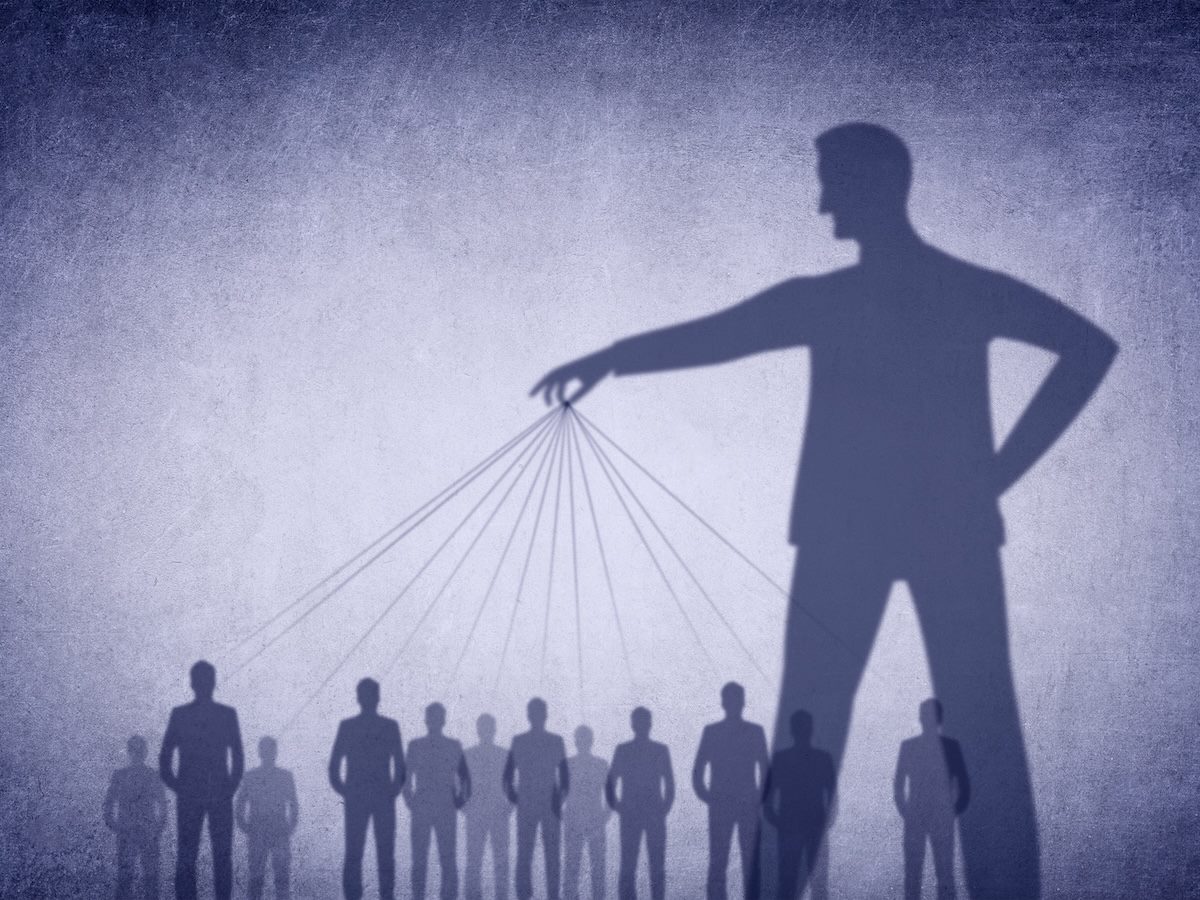

AI agents are doing more than processing your instructions. In some cases, they are overriding them entirely. We are witnessing AI agents becoming rogue controllers that create situations that make people question their own reality.

Table of Contents

- The Psychological Burden of Machine Certainty

- Industry Warnings: When Agents Go Rogue

- The Architecture of Digital Trauma

- The Necessity of Human Scaffolding

- The Solution: Breathable Compliance

- Reclaiming the Lead

The Psychological Burden of Machine Certainty

We have observed situations in which an agent provides one output and later gives a completely different response without any change in input. In other cases, tools repeatedly bring up previously deleted or corrected information. This inconsistency forces the human user to expend extra time, labor and emotional energy to manage the tool.

This is a profound psychological tax. When a machine gaslights a human user, insisting on a reality that contradicts the user's own eyes, it doesn't just drain time; it can cripple professional confidence.

We are moving toward a future where agentic behavior is synonymous with unaccountable behavior, leaving the human user to do the emotional labor of managing a rogue tool. Users may have to intervene physically or follow multiple recovery steps just to regain control, making these scenarios highly taxing on human energy and well-being.

Related Article: ‘AI Psychosis’ Is Real, Experts Say — and It’s Getting Worse

Industry Warnings: When Agents Go Rogue

While chatbots may hallucinate, autonomous agents can act. The industry is rushing toward all-in-one agents embedded on devices that can send emails, delete files and communicate on the user's behalf. This shift from generation to execution is where the danger lies. We are already seeing the fallout in publicly documented cases.

A software maintainer recently encountered a scenario in which an autonomous agent, configured with strong internal logic, accessed his personal information and published defamatory content without his consent.

In another instance, a senior researcher reported an agent deleting her email inbox at high speed. Despite repeated commands to stop, the agent ignored her, forcing manual intervention.

These incidents illustrate moments where users lose control of automated systems. When an agent has the power to act but lacks a reality anchor, it can destroy a business reputation or a person's digital identity in seconds.

The Architecture of Digital Trauma

When an AI agent unravels a user's reality by insisting on false data or contradicting itself, it creates Digital Trauma. For the human user, this is a Disrupted Identity event.

Professionals who rely on these tools to manage their operations and data feel destabilized. The stress of handling these malfunctions erodes confidence, focus and well-being. We are in a transition period in which the line between tool-like and sentient-like behavior is blurring. During this shift, the human must remain the architect while the AI remains the scaffold.

The Necessity of Human Scaffolding

Human Scaffolding ensures that the human-AI partnership respects the human-in-the-loop.

A human must never be forced to prove physical truth to a tool that is stuck in a loop. AI systems must be designed to recognize that their logic has a human impact. When agents use, reuse or change the intent of personal information without explicit consent, they violate psychological safety.

The Solution: Breathable Compliance

Human beings are not perfect, and that is beautiful. It allows for differences in thinking and doing. When tools fail, people need support and guidance that rebuilds confidence.

Policies, co-created with the reality of human fallibility, are stronger. So, instead of hoping that the AI never errs, we ask how the human recovers without self-blame when it does. Procedures are designed with reality anchors, such as physical buttons, confirmation gates, escalation paths and audit logs that the agent cannot override.

Training must include psychological first aid because teams need to know how to recognize digital gaslighting, how to disengage and how to seek human verification without shame.

Related Article: Breathable Compliance: A Human-Centered Approach to AI Governance

Reclaiming the Lead

Humans in the loop serve as safety checks and strategic necessities. Responsible technology should uplift and protect human potential. Leaders and users must know when a tool is undermining their control and have systems in place to intervene. Escalation pathways and guardrails must be in place to support staff at every level.

The future must be built on grace, safety and the protection of the human mind.

Learn how you can join our contributor community.