The AI industry has a power problem. As models grow larger and demand for compute skyrockets, the limiting factor is no longer semiconductor supply — it's the staggering amount of electricity needed to run and cool the hardware. One Los Angeles startup thinks the answer lies roughly 300 miles above our heads.

Orbital, a new space-infrastructure company, announced today that it has secured funding from a16z Speedrun to support its first test mission: a satellite carrying NVIDIA-powered servers into low Earth orbit, where it will attempt to run AI workloads using nothing but solar energy and the natural cold of space.

The mission, dubbed Orbital-1, is scheduled to launch aboard a SpaceX Falcon 9 rocket in April 2027.

Table of Contents

- The Grid Can't Keep Up With AI's Energy Appetite

- Training Needs Tight Clusters — Inference Can Go Orbital

- What Orbital-1's 2027 Mission Will Test

- Orbital Secures a16z Funding for First Mission

- From Electric Scooters to Orbital Servers

- Can Space Compete With Nuclear and Remote Grids?

The Grid Can't Keep Up With AI's Energy Appetite

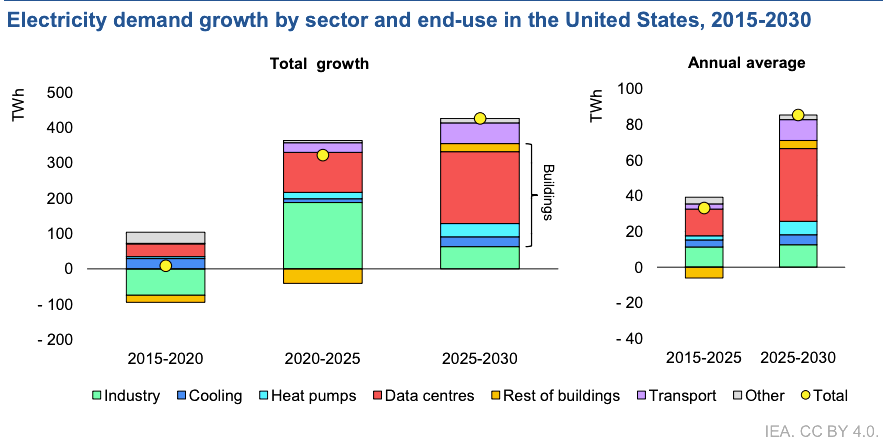

The global buildout of AI data centers is running headlong into grid limitations. Traditional facilities consume enormous quantities of electricity both to power GPUs and to cool them. According to the International Energy Agency, electricity consumption in the US is set to rise by around 2% per year, more than twice the rate of the past decade, with data center expansion a major driver.

Orbital's proposition is straightforward: rather than competing for scarce terrestrial power, generate it where it's abundant and free.

In sun-synchronous orbit, solar energy is available continuously: no weather, no nighttime, no grid dependency. And because space is a near-perfect vacuum, heat from servers can be radiated directly away without the massive cooling systems that dominate data center costs on Earth.
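To give a rough sense of what "radiated away" means in practice, the sketch below applies the Stefan-Boltzmann law to estimate how much radiator surface a given heat load would need. It is a back-of-the-envelope illustration, not Orbital's design: the function name, the emissivity, the radiator temperature and the 10 kW heat load are all assumptions chosen for the example, and the model ignores sunlight and Earth's infrared heating the panel.

```python
# Rough radiator sizing from the Stefan-Boltzmann law.
# All default values are illustrative assumptions, not Orbital's figures.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9,
                     sink_temp_k=3.0, sides=2):
    """Panel area needed to reject heat_w watts by thermal radiation alone.

    sides=2 assumes a flat panel that radiates from both faces.
    Absorbed sunlight and Earth's infrared are ignored, so a real
    radiator would need to be larger than this estimate.
    """
    net_flux = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k ** 4 - sink_temp_k ** 4
    )  # W per m^2 of radiating surface
    return heat_w / (net_flux * sides)


if __name__ == "__main__":
    # Hypothetical 10 kW server payload with a 320 K radiator.
    area = radiator_area_m2(heat_w=10_000, radiator_temp_k=320.0)
    print(f"Estimated panel area: {area:.1f} m^2")
```

Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks the required area quickly, which is one reason the thermal design matters as much as the solar array.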

"AI progress is being constrained by the grid," said Euwyn Poon, Orbital's CEO and founder. "Data center economics are dominated by electricity and cooling, and both are getting harder. In orbit, solar power is continuous and cooling is fundamentally different. Orbital is building compute infrastructure that removes the energy ceiling and scales with AI's potential."

Training Needs Tight Clusters — Inference Can Go Orbital

Orbital isn't trying to replicate a hyperscale training cluster in orbit.

Training large AI models requires thousands of GPUs communicating at near-zero latency, tightly coupled in ways that don't translate well to satellite architecture. Inference, however, is a different story. Each request is processed independently, meaning capacity can be distributed across many nodes without the same interconnect demands.

Orbital is designing a constellation of satellites, each housing a server cluster, to handle inference workloads in parallel. The approach plays to the strengths of a distributed orbital network rather than fighting against its constraints.
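The reason inference spreads out so easily can be shown in a few lines: since no request depends on another, a scheduler can hand each one to whichever node is next in line. The sketch below uses simple round-robin assignment; the node names and the `dispatch` function are hypothetical, and nothing here reflects how Orbital actually routes traffic.

```python
from itertools import cycle


def dispatch(requests, nodes):
    """Assign each independent request to a node in round-robin order.

    Because requests do not share state, no node ever has to wait on
    another, which is what makes inference easy to distribute.
    """
    assignment = {node: [] for node in nodes}
    for request, node in zip(requests, cycle(nodes)):
        assignment[node].append(request)
    return assignment


if __name__ == "__main__":
    # Hypothetical satellite names and request IDs.
    plan = dispatch(["r1", "r2", "r3", "r4", "r5"], ["sat-a", "sat-b"])
    for node, batch in plan.items():
        print(node, batch)
```

Training has no equivalent shortcut: every GPU must exchange gradients with the others at each step, so the slowest link between nodes sets the pace for the whole cluster.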

What Orbital-1's 2027 Mission Will Test

The April 2027 mission has three main objectives:

- Sustained GPU operation in orbit: Validating that server hardware can function reliably in the space environment over extended periods

- Radiation hardening: Testing protections against the ionizing radiation that degrades electronics outside Earth's atmosphere

- Commercial AI inference: Running real inference workloads in space after the validation phase is complete

The company is also filing with the FCC to deploy a broader constellation of satellites for orbital AI compute infrastructure.

Orbital Secures a16z Funding for First Mission

Orbital's funding comes from a16z Speedrun, the rapid-deployment investment program run by venture giant Andreessen Horowitz.

"Speedrun backs founders to explore ambitious ideas — the harder the problem, the better," said Andrew Chen, General Partner at a16z Speedrun. "Orbital is taking on AI's biggest constraint with a bold and radical idea."

The company is also opening Factory-1, its research and development facility in Los Angeles, where it will design and manufacture the satellite hardware.

From Electric Scooters to Orbital Servers

Poon is no stranger to building physical infrastructure at scale. A Cornell-educated engineer and lawyer, he previously founded Spin, the micromobility company that deployed hundreds of thousands of electric vehicles across 100 cities before being acquired by Ford. Under his leadership, Spin grew to over $100 million in revenue.

After exiting Spin, Poon turned his attention to AI infrastructure investing — and saw the energy constraint bearing down on the industry.

"The energy ceiling on AI isn't theoretical, it's a real constraint that will impede the advancement of intelligence," Poon said. "This is the solution."

Can Space Compete With Nuclear and Remote Grids?

Orbital is entering a landscape where AI infrastructure demand influences energy policy, real estate markets and even geopolitics. Tech giants are signing nuclear power deals and scouting remote locations with cheap electricity just to keep pace with compute needs.

Whether space-based data centers can compete on cost, latency and reliability remains an open question. But if Orbital can prove that GPUs run reliably in orbit and that the economics pencil out, it could open an entirely new front in the race to build AI infrastructure.

The first real test comes in April 2027.