Every year, the Stanford Institute for Human-Centered Artificial Intelligence (HAI) releases its AI Index Report, and every year the numbers surprise even those who spend their days watching this field closely.

The 2026 edition is no different. Across multiple categories spanning investment, workforce impact, scientific research, transparency and education, the report paints a picture of a technology advancing faster than the institutions, policies and oversight structures governing it.

What makes this year's findings worth sitting with is not any single data point, but the pattern that runs through them all: capability is outpacing accountability, and the gap is widening.

Here’s what the data says and why it matters.

Table of Contents

- 1. The Performance Leap Is Real, But So Are the Blind Spots

- 2. The US-China Gap Has Effectively Closed

- 3. Capital Is Flooding In, Transparency Is Draining Out

- 4. Young Workers Are Absorbing the Disruption First

- 5. AI Is Doing Real Science Now

- 6. Adoption Is Outrunning the Infrastructure to Support It

- 7. Public Trust Is Mixed, and the US Stands Out for Skepticism

- The Real Message from Stanford's AI Index Report

1. The Performance Leap Is Real, But So Are the Blind Spots

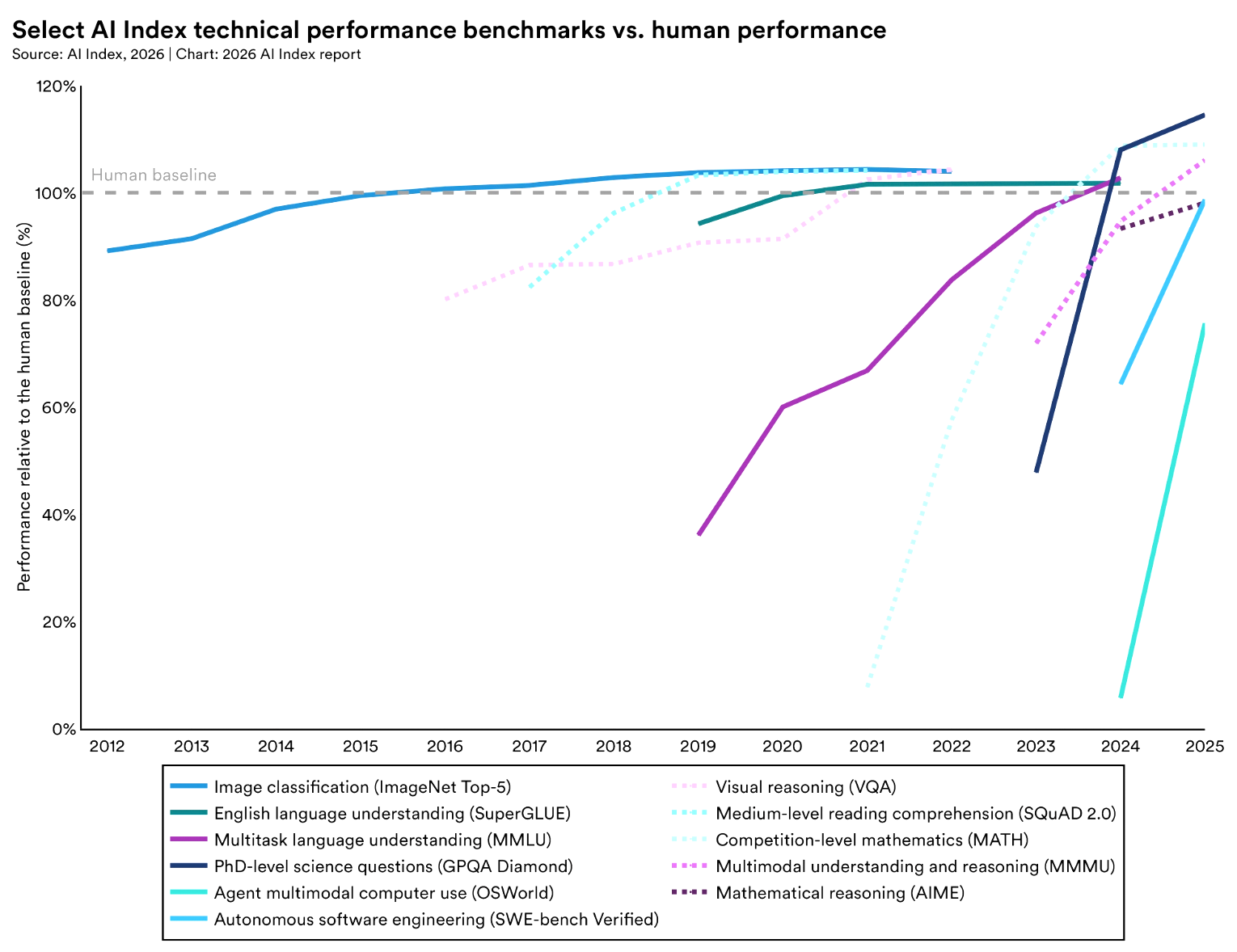

Let's start with what AI can do now. In 2025, AI agents went from answering questions to completing tasks, though they still fail about 1 in 3 attempts on established benchmarks. On OSWorld, which tests agents on computer tasks across operating systems, accuracy rose from about 12% to 66.3%, putting agents within 6 percentage points of human performance.

In cybersecurity, AI agents went from solving 15% of problems in 2024 to 93% in 2025. Frontier models now match or exceed human performance on PhD-level science questions, competition math and multimodal reasoning. These are not marginal gains; they amount to a step change in what these systems can do within constrained, well-defined environments.

But the report is equally clear about where these systems fall short. AI still struggles to reliably interpret time, performs poorly at multi-step planning and succeeds in only 12% of real household tasks.

One of the sharpest contrasts in the entire report is the distance between what AI can do on a benchmark and what it can do in a kitchen. It suggests that today's systems are highly capable in narrow domains but brittle in unstructured environments. Anyone building or deploying AI in operational settings needs to hold both facts in mind at once.

2. The US-China Gap Has Effectively Closed

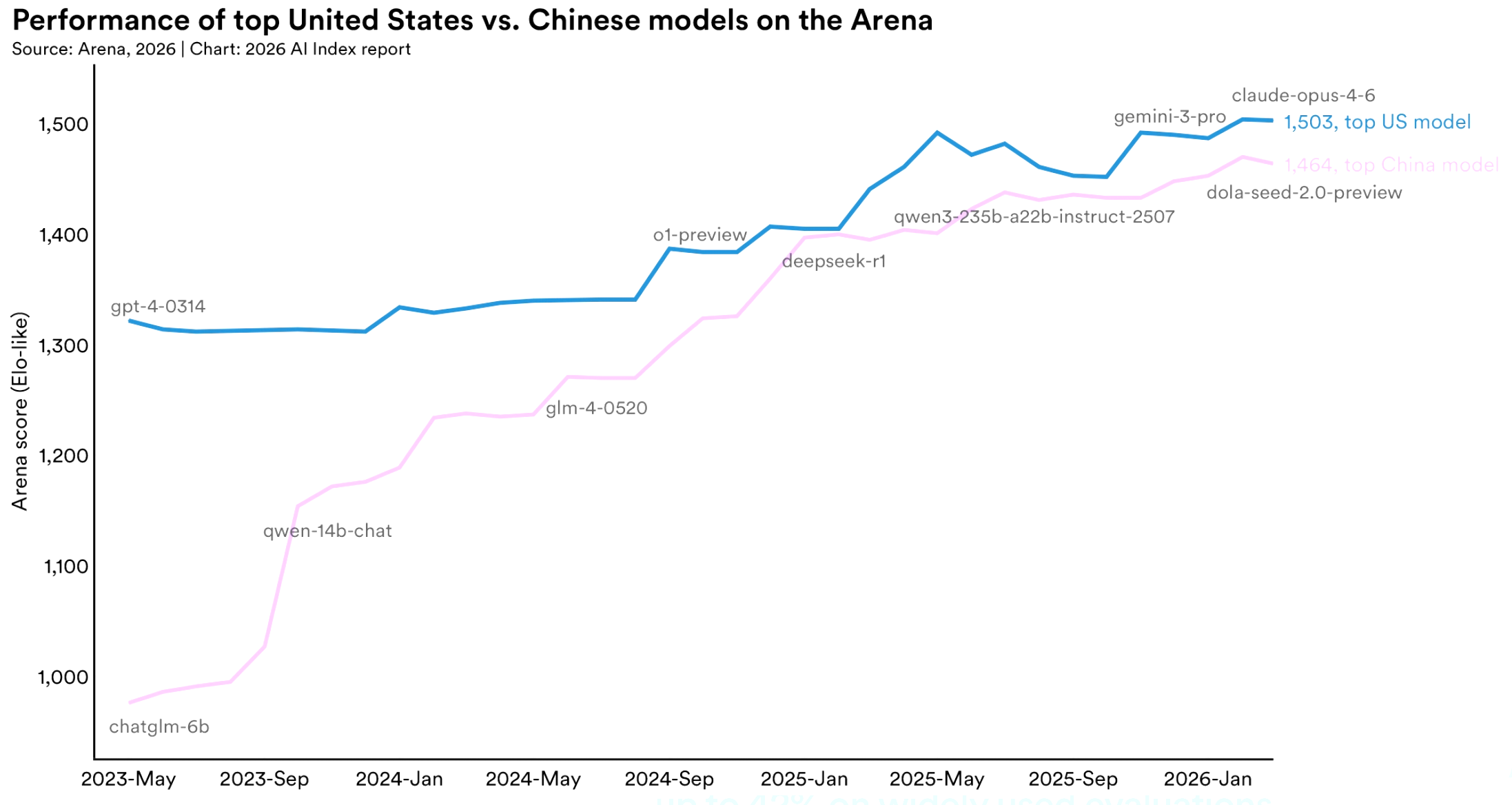

For years, the conventional wisdom was that the United States held a commanding and growing lead in AI. Stanford's 2026 report significantly complicates that story. As of March 2026, Anthropic's top model leads its nearest Chinese competitor by just 2.7% on performance benchmarks. DeepSeek-R1, released in January 2025, briefly matched the top US model outright.

While the US has maintained a steady lead in the production of top-tier AI models and high-impact patents, China leads in publication volume, total citations, patent output and industrial robot installations. What’s unfolding is not one country pulling ahead but two systems of innovation producing results in different ways.

The US is also losing ground on talent. The number of AI scholars moving to the United States has fallen by 89% since 2017, and by 80% last year alone.

This isn't to say the US is no longer attractive to top talent; America is still home to more AI researchers than any other country. But its lead is slipping fast, and that poses an urgent challenge.

3. Capital Is Flooding In, Transparency Is Draining Out

Global corporate investment in AI hit $581.7 billion in 2025, up 130% from the previous year. Private investment reached $344.7 billion, a 127.5% rise over 2024 levels. The US accounted for $285.9 billion of that total, 23.1 times China's $12.4 billion.

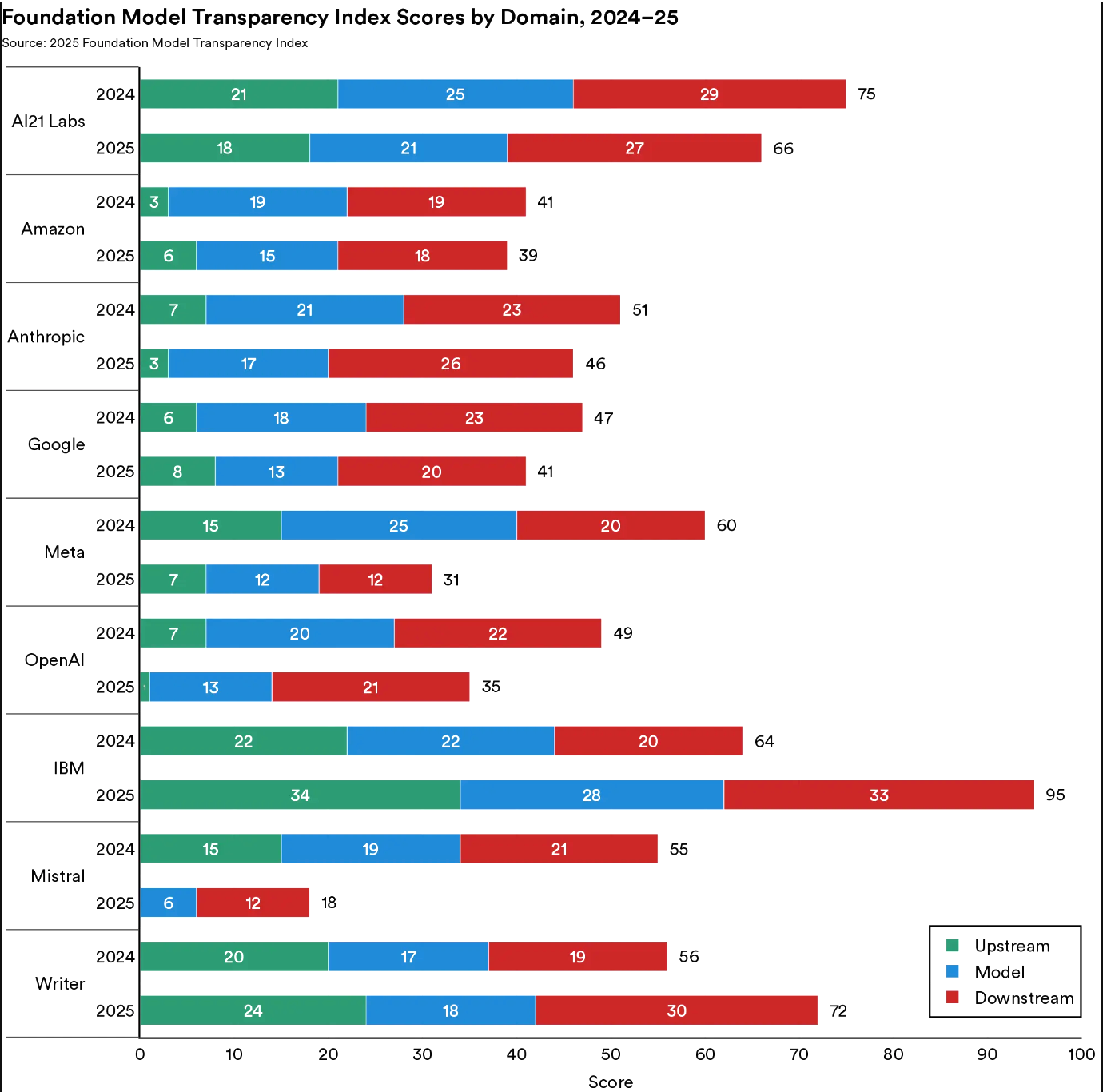

Yet that capital concentration is producing something unexpected: less openness, not more. The Foundation Model Transparency Index, which tracks how openly AI companies disclose details about training data, capabilities and risk, dropped from an average score of 58 to 40 in a single year. The report notes that the most capable models are now among the least transparent. As systems get better and the companies behind them grow larger, the impulse to protect competitive advantage is overriding the culture of open research that originally defined the field.

This opacity has consequences beyond competitive positioning. Reported AI-related incidents now run to 300 to 400 or more a year, and Juan N. Pava, Tech Ethics and Policy Research Fellow at Stanford's Ethics in Society program, cautions against reading that number in isolation.

The increase in incidents, he said, "should be interpreted in the context of unprecedented adoption, with generative AI reaching over half the population within three years. While this may partly reflect riskier deployments, gaps in responsible AI reporting make it difficult to assess underlying risk trends."

Put plainly: more incidents may signal more harm, or they may just reflect more use and more reporting. Without consistent measurement standards, the number alone doesn't tell us which.

4. Young Workers Are Absorbing the Disruption First

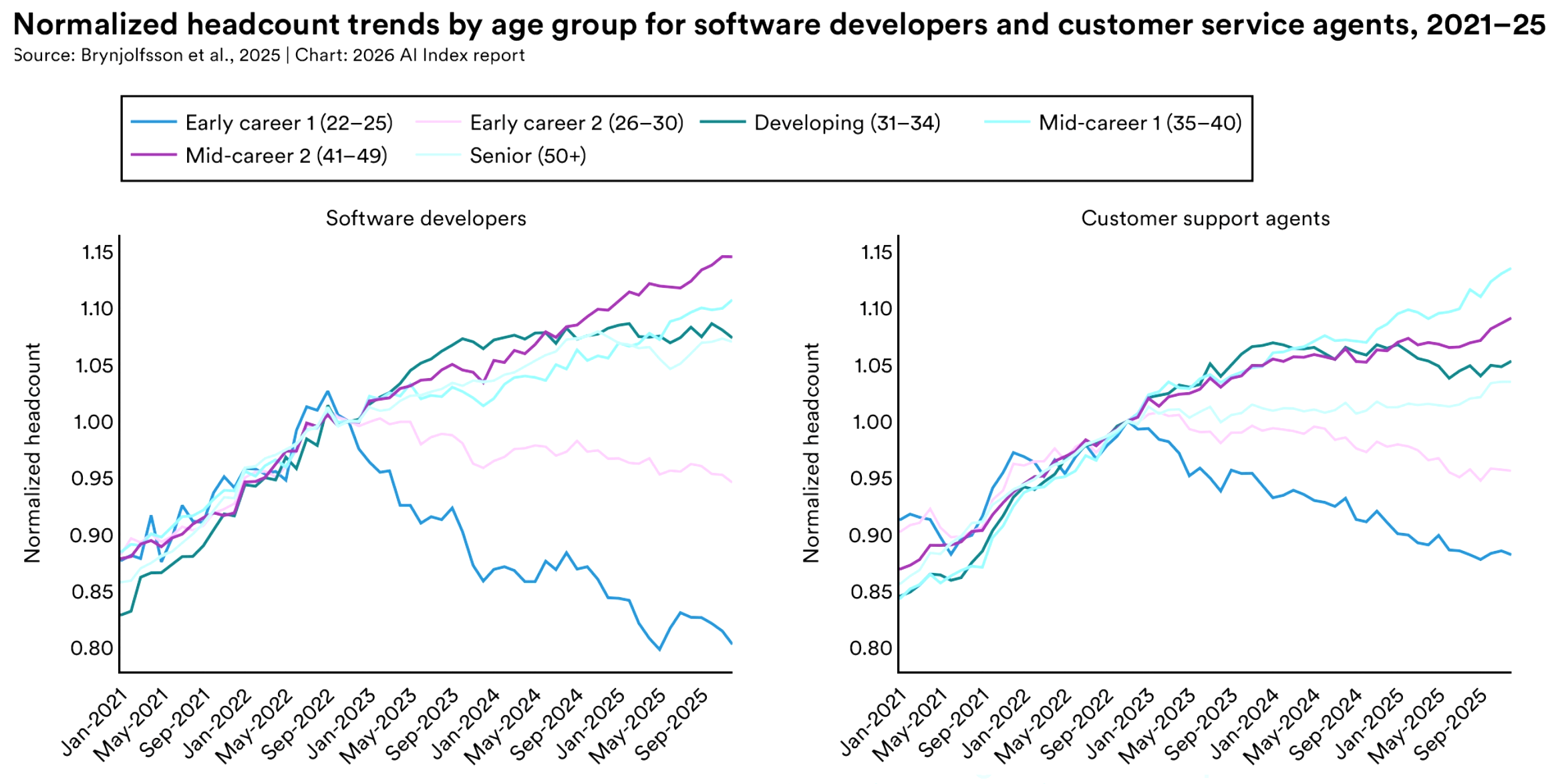

One of the most concrete findings in the report concerns the economy and the workforce, specifically who gets disrupted first. Employment for young software developers (aged 22 to 25) has fallen by nearly 20% since 2024.

It's the same story in customer service and other roles with high AI exposure. Meanwhile, executive surveys show that firms are planning workforce reductions well beyond recent layoffs.

This is not a hypothetical future disruption. It's happening right now, and it's concentrated in entry-level positions. The people who would normally spend their early career building skills, professional networks and domain expertise are finding that pipeline contracting precisely when AI productivity gains are being cited most loudly. That creates a skills formation problem that won’t show up in productivity statistics for years, but will matter enormously when it does.

The World Economic Forum's Future of Jobs Report 2020 estimated that, by 2025, automation could displace 85 million jobs while creating 97 million new ones globally. The net number looks positive until you look at who absorbs the displacement and who captures the creation. Stanford's data suggests they are not the same people.

5. AI Is Doing Real Science Now

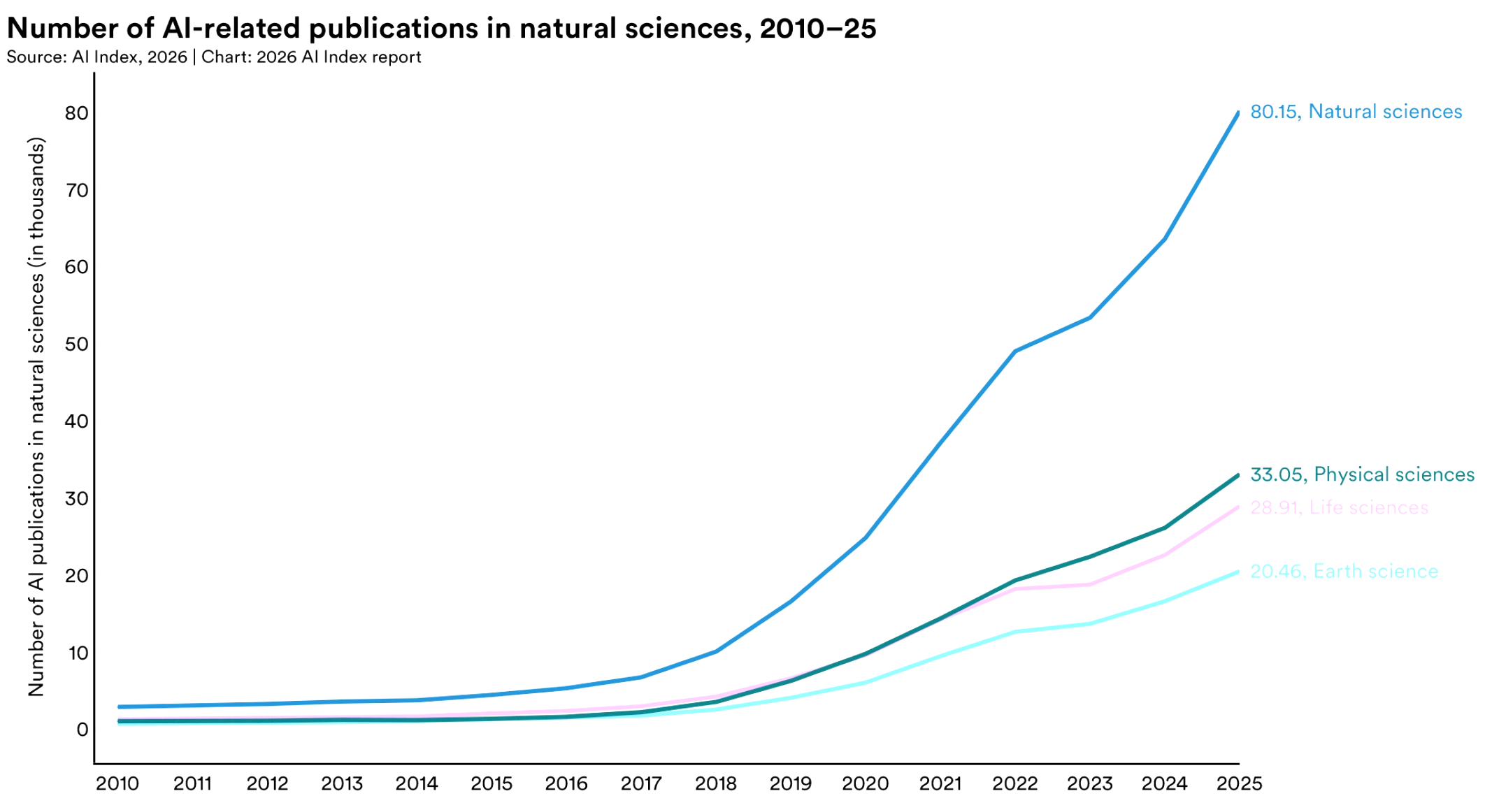

Buried beneath the investment figures and workforce totals is a finding that deserves more attention: AI is becoming a real contributor to science, with AI-related publications in the natural, physical and life sciences growing between 26% and 28% year over year.

More importantly, the nature of that contribution is changing. In 2025, for example, AI ran a complete weather forecasting pipeline end-to-end for the first time, taking raw meteorological data and producing final predictions for temperature, wind and humidity without human input.

Astronomy gained its first foundation model, AION-1, trained on more than 200 million celestial objects from 5 major surveys.

Stanford's report also documents the rise of digital twins in medicine, dynamic computational models of individual patients that update in real time and support treatment planning. Publication counts in this area went from near zero in 2015 to 372 in 2025.

In clinical settings, physicians across multiple hospital systems reported spending up to 83% less time on documentation, with meaningful reductions in burnout. This is one area where the deployment story and the outcome data are actually aligned.

6. Adoption Is Outrunning the Infrastructure to Support It

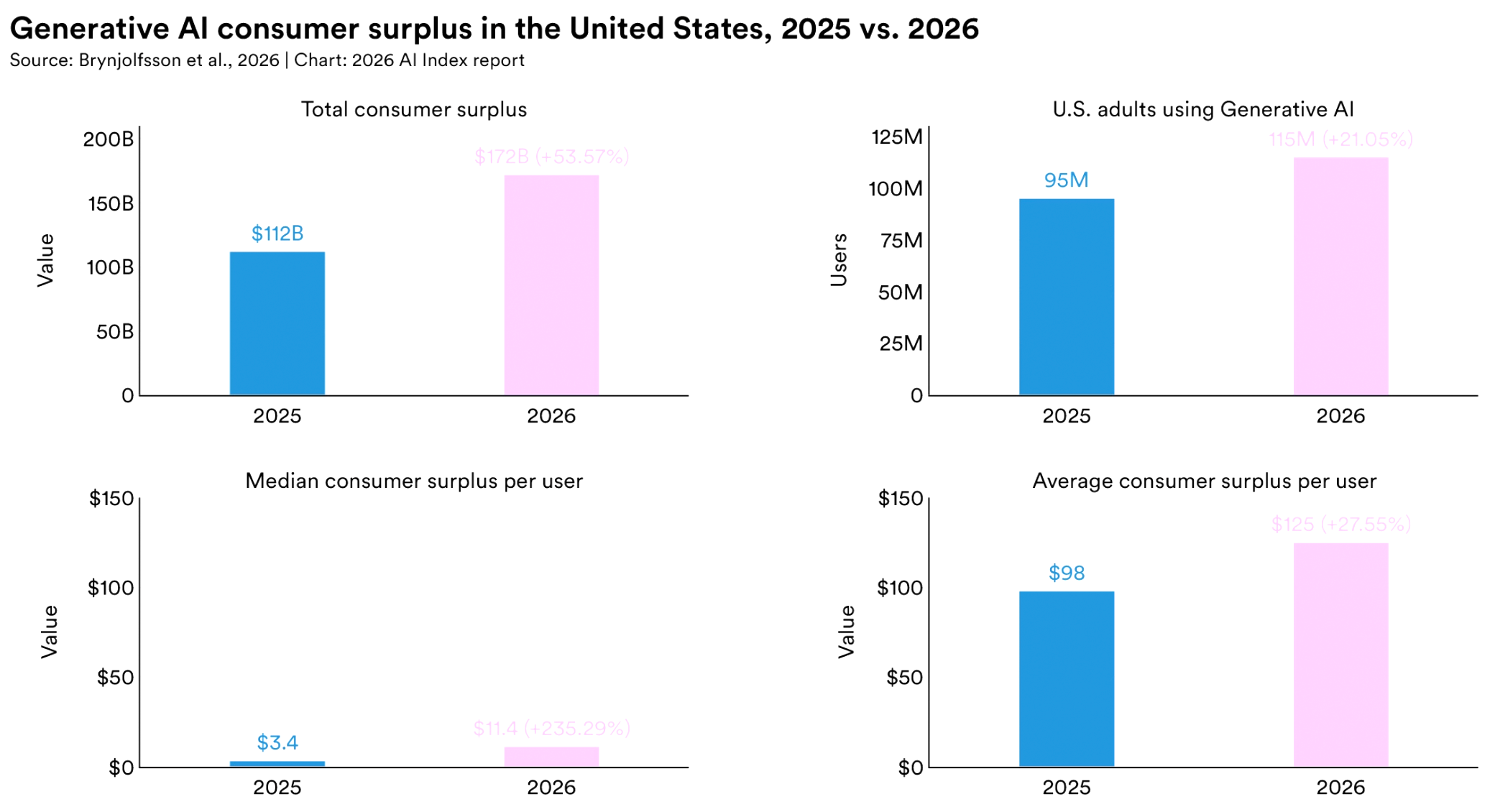

Generative AI reached 53% population adoption in just 3 years, faster than either the PC or the internet. The estimated value of these tools to US consumers alone reached $172 billion per year by early 2026, with median value per user tripling between 2025 and 2026. Adoption is not uniform: Singapore leads at 61%, the UAE is at 54% and the US ranks 24th at 28.3%.

In education, the adoption picture is particularly stark. Four in five US high school and college students now use AI to complete schoolwork, yet only half of middle and high schools report having any AI policy at all, and only 6% of teachers believe those policies are clear.

Matthew Wemyss, Assistant School Director at Cambridge School of Bucharest and founder of IN&ED, put the challenge in terms that school leaders can act on:

"The gap between 80% student use and 6% policy clarity isn't a crisis, it's an invitation. Students have shown us exactly where the demand is, and that's the easiest part of any change to act on. School leaders don't need to have all the answers, they need to give their staff permission to start, and the cover to learn as they go. The schools that move now won't get it perfect, but they'll be the ones their students actually trust to talk to about this."

Wemyss's framing rejects the paralysis that often follows such data. Policy lag is real, but waiting for perfect clarity before acting is its own form of failure.

Outside formal education, people are picking up AI skills independently. The UAE, Chile and South Africa are seeing the fastest growth in AI engineering skills acquisition. The learning is happening; it's just not organized around institutions that can systematically verify or build on it.

7. Public Trust Is Mixed, and the US Stands Out for Skepticism

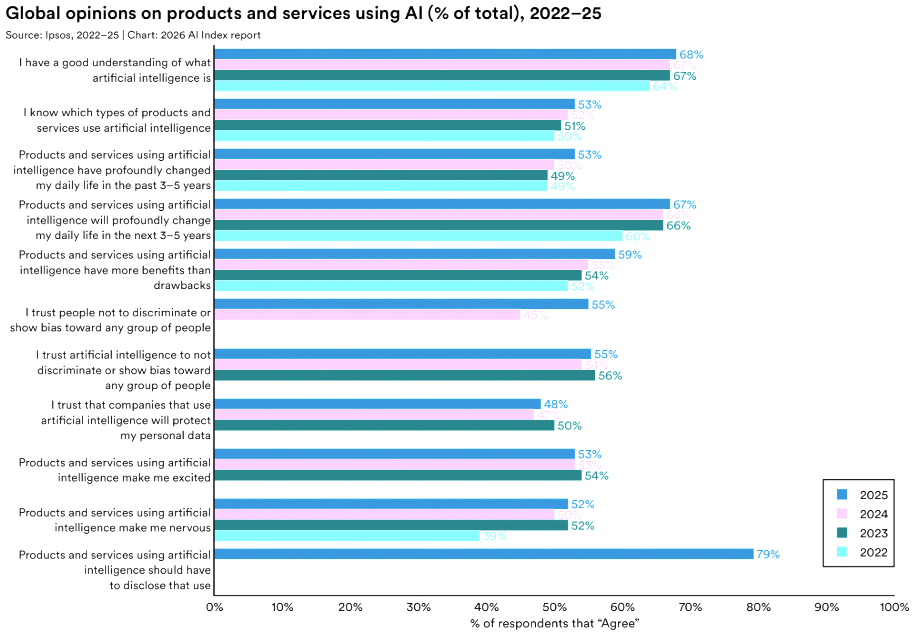

Globally, public opinion on AI has been mixed: 59% of people report optimism about AI's benefits, up from 52%. But nervousness is also ticking up, now at 52%, a rise of 2 percentage points.

The US diverges from the global picture in some telling ways. Only 33% of Americans expect AI to improve their jobs, compared with a global average of 40%. And the US records the lowest level of trust in government to regulate AI among the countries surveyed (31%).

That trust gap looks different depending on where you sit in the world, and Pava argues the dynamic is more layered than simple optimism or fear. The speed of diffusion, he said, helps explain why trust is diverging globally rather than declining, with higher use and optimism in emerging economies than in advanced ones.

"Together, these trends raise important questions for global AI governance, pointing to the need for context-sensitive approaches and stronger, more consistent measurement of responsible AI to better understand evolving risks."

Trust tracks use, and use is distributed unequally, noted Pava. Countries where AI is arriving as an opportunity show more confidence. Regions where AI disrupts established systems and labor markets show greater anxiety. Policy responses built from one side of that divide won’t serve the other.

The Real Message from Stanford's AI Index Report

Individually, each of these findings is impressive. Collectively, they reveal a central theme: the technology is advancing, but the systems designed to govern it, share it and train people in it are not.

Investment in AI is at an all-time high; trust in it is not. Similarly, AI deployment keeps increasing, but the policies meant to govern its development and use are not keeping pace.

The real question now is whether the people reading this report are willing to respond to what it actually says, not just to the parts they already wanted to hear.